ResNetV1 - Deep Residual Learning for Image Recognition - 2015

ResNetV2 - Identity Mappings in Deep Residual Networks - 2016

1. ResNetV1

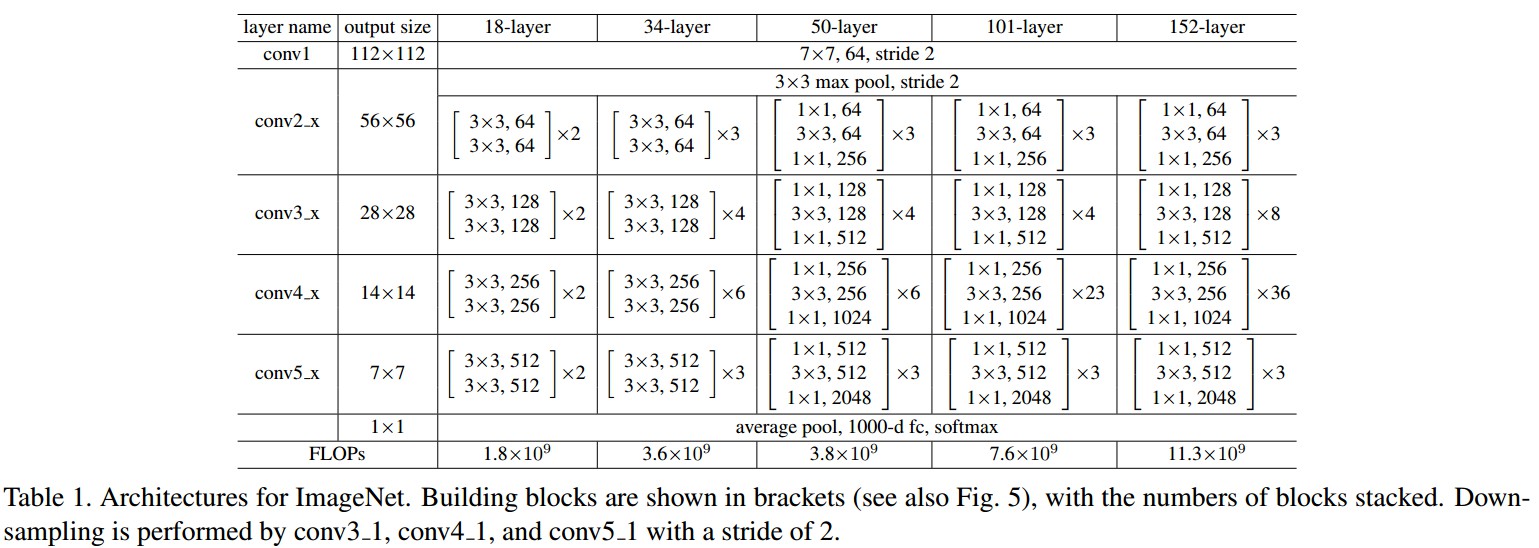

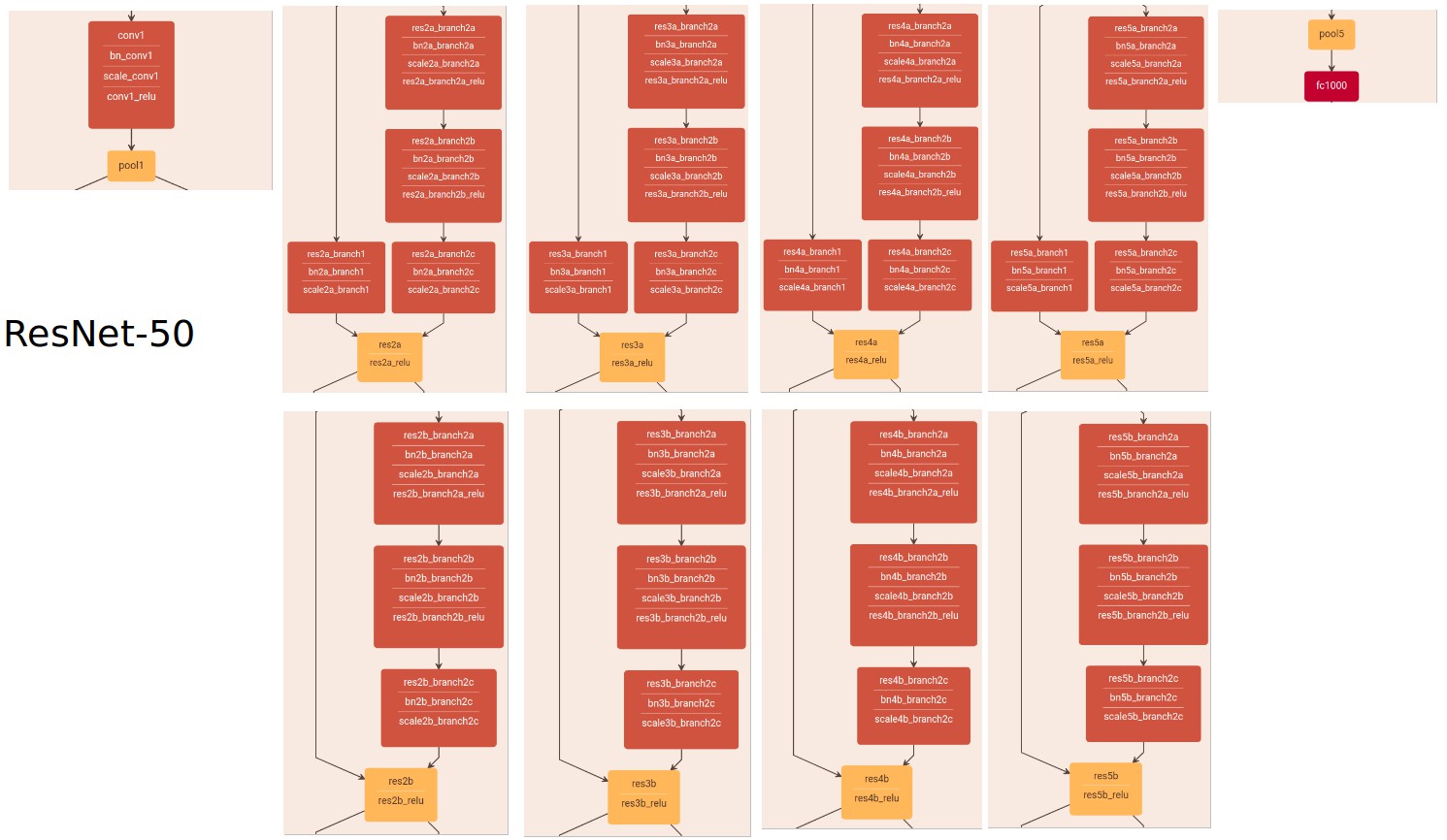

Network architectures given in the ResNetV1 paper:

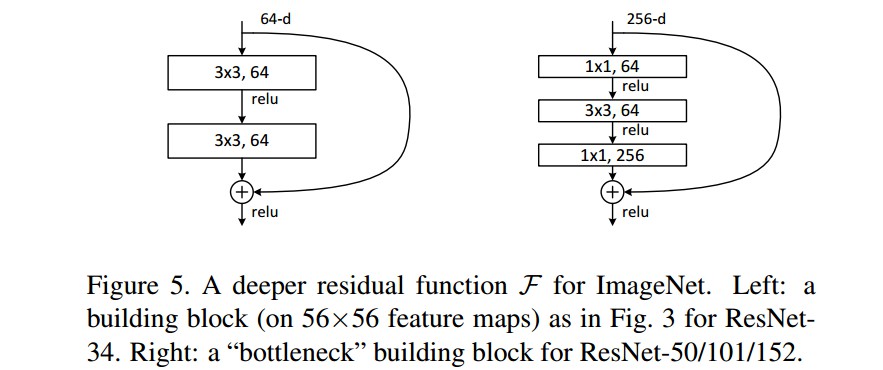

In Table 1, ResNet-18 and ResNet-34 use the two-layer basic building block of Figure 5 (left), while ResNet-50, ResNet-101 and ResNet-152 use the three-layer bottleneck block of Figure 5 (right).
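To see why the deeper models switch to the bottleneck, the short sketch below counts convolution weights (biases and BN parameters ignored) for a basic block that is 64 channels wide and for a bottleneck block whose 256-channel input is reduced to 64 channels around the 3x3 conv. The helper names are hypothetical; each layer simply contributes c_in × c_out × k × k weights.

```python
def conv_weights(c_in, c_out, k):
    """Number of weights in a k x k convolution without bias."""
    return c_in * c_out * k * k


def basic_block_weights(planes):
    # Figure 5 (left): 3x3 conv -> 3x3 conv, both `planes` channels wide
    return conv_weights(planes, planes, 3) + conv_weights(planes, planes, 3)


def bottleneck_weights(planes, expansion=4):
    # Figure 5 (right): 1x1 reduce -> 3x3 -> 1x1 expand back to planes * expansion
    width = planes * expansion
    return (conv_weights(width, planes, 1)
            + conv_weights(planes, planes, 3)
            + conv_weights(planes, width, 1))


print(basic_block_weights(64))  # 73728
print(bottleneck_weights(64))   # 69632
```

The two totals are close, so the bottleneck handles a 4x wider input and output for roughly the same cost, which is what keeps the 50/101/152-layer models affordable.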

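In Table 1, the number each bracket is multiplied by (e.g. 2, 3, 4, 6, 8, 23, 36) is the number of blocks stacked in that stage. For example, conv4_x of ResNet-101 is multiplied by 23, so that stage contains 23 bottleneck blocks (in ResNet-152 the same stage is multiplied by 36).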
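As a quick sanity check on Table 1, the sketch below recovers each model's depth from its per-stage block counts, assuming the usual counting convention: the 7x7 stem conv, the convolutions inside the blocks, and the final fully connected layer are counted, projection shortcuts are not. The `CONFIGS` dict is illustrative and just copies the lists passed to `ResNet(...)` in the constructors further down.

```python
# Blocks per stage (conv2_x .. conv5_x), i.e. the multipliers in Table 1
CONFIGS = {
    'resnet18': ('basic', [2, 2, 2, 2]),
    'resnet34': ('basic', [3, 4, 6, 3]),
    'resnet50': ('bottleneck', [3, 4, 6, 3]),
    'resnet101': ('bottleneck', [3, 4, 23, 3]),
    'resnet152': ('bottleneck', [3, 8, 36, 3]),
}

# Weighted layers inside one block: two 3x3 convs, or 1x1 -> 3x3 -> 1x1
LAYERS_PER_BLOCK = {'basic': 2, 'bottleneck': 3}


def depth(block_type, blocks):
    # 1 stem conv + all convs inside the blocks + 1 fully connected layer
    return 1 + LAYERS_PER_BLOCK[block_type] * sum(blocks) + 1


for name, (block_type, blocks) in CONFIGS.items():
    print(name, depth(block_type, blocks))
```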

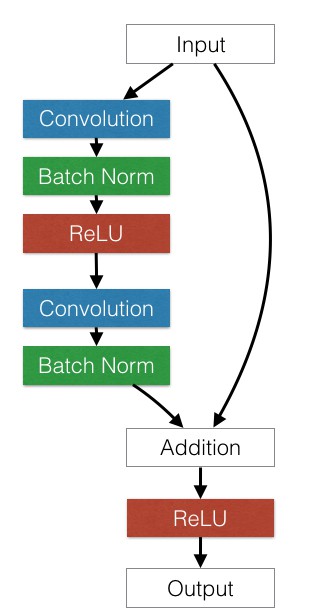

Residual unit:
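For reference, the ResNetV1 paper writes the residual unit as below. F is the residual branch, W_s is a projection used only when the shapes of x and F differ (the role of `downsample` in the PyTorch code), and σ is ReLU; BN and biases are omitted, and the unit's final output is σ(y).

```latex
\begin{aligned}
\mathbf{y} &= \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x}
  && \text{identity shortcut} \\
\mathbf{y} &= \mathcal{F}(\mathbf{x}, \{W_i\}) + W_s \mathbf{x}
  && \text{projection shortcut} \\
\mathcal{F}(\mathbf{x}, \{W_i\}) &= W_2\, \sigma(W_1 \mathbf{x})
  && \text{two-layer block}
\end{aligned}
```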

1.1. Definition in PyTorch

```python
import torch.nn as nn
import torch.utils.model_zoo as model_zoo

__all__ = ['ResNet', 'resnet18', 'resnet34', 'resnet50', 'resnet101', 'resnet152']

model_urls = {
    'resnet18': 'https://download.pytorch.org/models/resnet18-5c106cde.pth',
    'resnet34': 'https://download.pytorch.org/models/resnet34-333f7ec4.pth',
    'resnet50': 'https://download.pytorch.org/models/resnet50-19c8e357.pth',
    'resnet101': 'https://download.pytorch.org/models/resnet101-5d3b4d8f.pth',
    'resnet152': 'https://download.pytorch.org/models/resnet152-b121ed2d.pth',
}


def conv3x3(in_planes, out_planes, stride=1):
    """3x3 convolution with padding"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)


class BasicBlock(nn.Module):
    # Figure 5 (left): two 3x3 convs, used by ResNet-18/34
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        self.downsample = downsample  # projection shortcut when shapes differ
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)  # V1: ReLU is applied after the addition
        return out


class Bottleneck(nn.Module):
    # Figure 5 (right): 1x1 reduce -> 3x3 -> 1x1 expand, used by ResNet-50/101/152
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * self.expansion, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * self.expansion)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)
        return out


class ResNet(nn.Module):

    def __init__(self, block, layers, num_classes=1000):
        super(ResNet, self).__init__()
        self.inplanes = 64
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        # The original used nn.AvgPool2d(7, stride=1), which only reduces to 1x1
        # for 224x224 inputs. Adaptive pooling gives 1x1 for any input size and
        # has no parameters, so the pretrained weights still load.
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')
            elif isinstance(m, nn.BatchNorm2d):
                nn.init.constant_(m.weight, 1)
                nn.init.constant_(m.bias, 0)

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        # 1x1 conv + BN projection when the stride or the channel count changes
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for _ in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        x = self.fc(x)
        return x
```
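The feature-map sizes through the network above can be checked with the standard output-size formula floor((n + 2p - k) / s) + 1. The sketch below traces a square input through the stem and the four stages (helper names are hypothetical); after layer1, only the stride-2 3x3 conv in the first block of each stage changes the size.

```python
def conv_out(size, kernel, stride, padding):
    """Output side length of a conv/pool layer (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet(size):
    """Feature-map side length after each stage of the ResNet above."""
    sizes = {}
    size = conv_out(size, 7, 2, 3)      # conv1: 7x7, stride 2, padding 3
    sizes['conv1'] = size
    size = conv_out(size, 3, 2, 1)      # maxpool: 3x3, stride 2, padding 1
    sizes['maxpool'] = size
    sizes['layer1'] = size              # layer1 uses stride 1 throughout
    for name in ('layer2', 'layer3', 'layer4'):
        size = conv_out(size, 3, 2, 1)  # first block's 3x3 conv has stride 2
        sizes[name] = size
    return sizes


print(trace_resnet(224))
# {'conv1': 112, 'maxpool': 56, 'layer1': 56, 'layer2': 28, 'layer3': 14, 'layer4': 7}
print(trace_resnet(256)['layer4'])  # 8
```

At 224x224 the last stage is 7x7, exactly what a fixed `nn.AvgPool2d(7, stride=1)` expects; at 256x256 it is 8x8, so a 7x7 window would leave a 2x2 map and the flattened vector would no longer match `self.fc`. `nn.AdaptiveAvgPool2d((1, 1))` pools to 1x1 regardless of input size.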

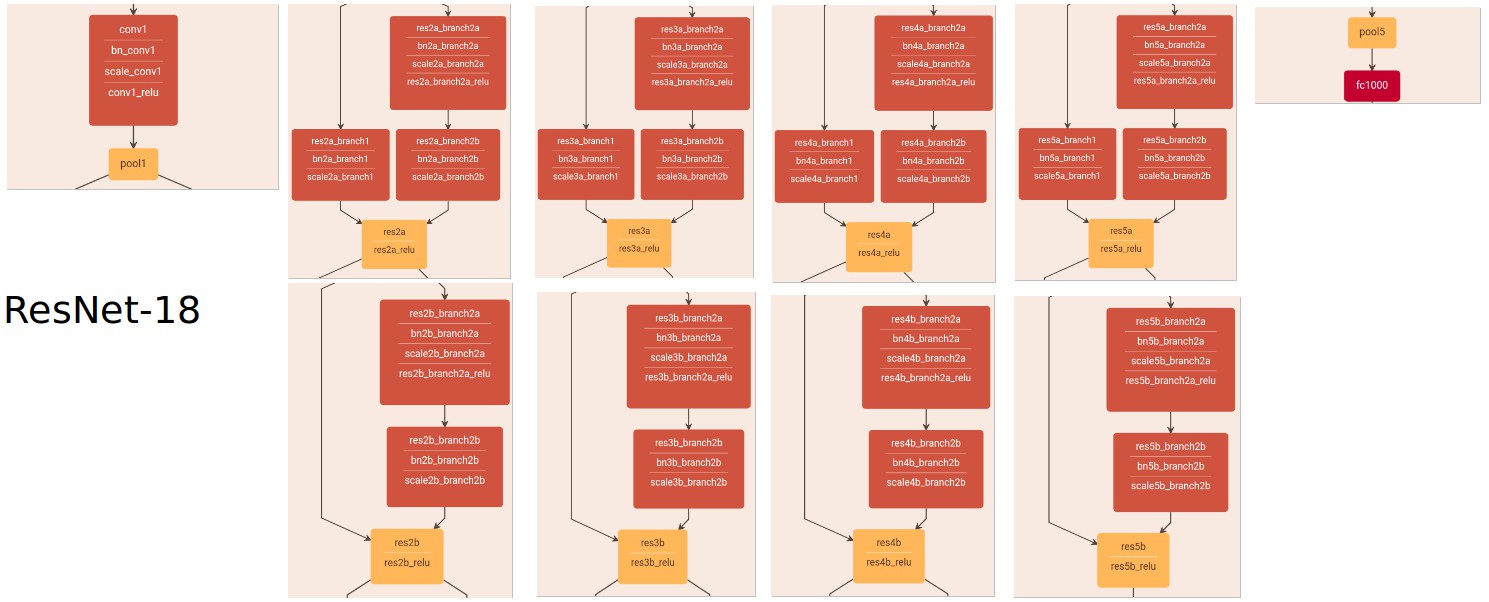

```python
def resnet18(pretrained=False, **kwargs):
    """Constructs a ResNet-18 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [2, 2, 2, 2], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet18']))
    return model


def resnet34(pretrained=False, **kwargs):
    """Constructs a ResNet-34 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet34']))
    return model


def resnet50(pretrained=False, **kwargs):
    """Constructs a ResNet-50 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet50']))
    return model


def resnet101(pretrained=False, **kwargs):
    """Constructs a ResNet-101 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 23, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet101']))
    return model


def resnet152(pretrained=False, **kwargs):
    """Constructs a ResNet-152 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 8, 36, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet152']))
    return model
```
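`_make_layer` only gives a stage's first block a projection shortcut, and only when the stride or the channel count changes. The sketch below replays that test in plain Python for a given `expansion` and block list; `shortcut_plan` is a hypothetical helper, not part of torchvision.

```python
def shortcut_plan(expansion, layers):
    """Mirror the downsample condition in ResNet._make_layer for every block.

    Returns one list per stage: True means a 1x1 conv + BN projection
    shortcut, False means an identity shortcut.
    """
    inplanes = 64  # channels after the stem, as in ResNet.__init__
    plan = []
    for planes, blocks, stride in zip((64, 128, 256, 512), layers, (1, 2, 2, 2)):
        stage = [stride != 1 or inplanes != planes * expansion]  # first block
        inplanes = planes * expansion
        stage += [False] * (blocks - 1)  # later blocks keep the same shape
        plan.append(stage)
    return plan


print(shortcut_plan(1, [2, 2, 2, 2]))     # resnet18
# [[False, False], [True, False], [True, False], [True, False]]
print(shortcut_plan(4, [3, 4, 6, 3])[0])  # resnet50, layer1
# [True, False, False]
```

For ResNet-18, layer1 needs no projection (64 to 64 channels at stride 1), whereas for ResNet-50 even layer1 starts with one, because the bottleneck expands the 64 stem channels to 256.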

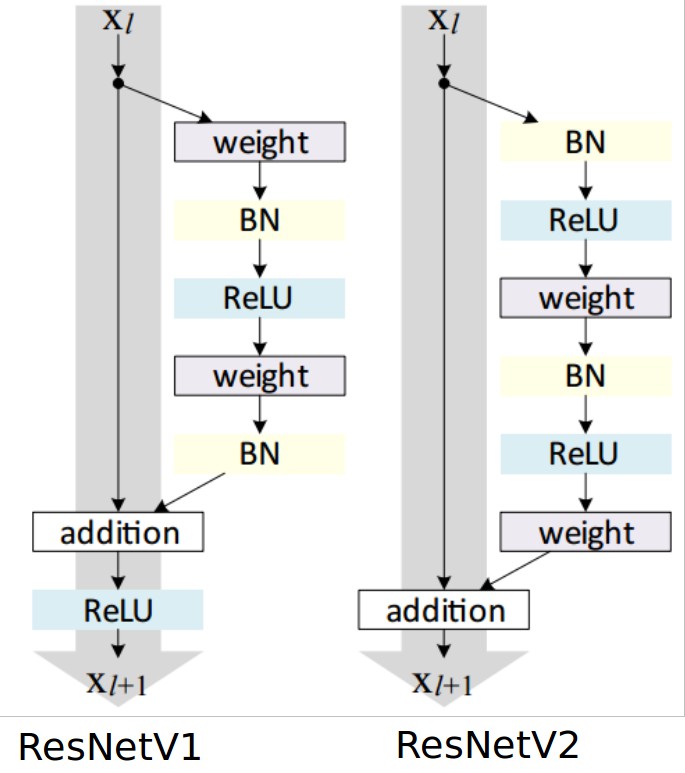

2. ResNetV2

Comparison of the ResNetV1 and ResNetV2 residual units:
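In ResNetV1 a unit computes x_{l+1} = ReLU(x_l + F(x_l)); in ResNetV2 the BN and ReLU move inside F as pre-activation, so x_{l+1} = x_l + F(x_l), and unrolling gives x_L = x_l + sum_{i=l}^{L-1} F(x_i). The toy sketch below uses scalars in place of feature maps, and all functions are hypothetical. With residual branches that output zero, the V2 stack passes its input through unchanged, while the ReLU after each V1 addition clips a negative input.

```python
def relu(v):
    return max(v, 0.0)


def v1_unit(x, residual_fn):
    # ResNetV1: shortcut + residual, then ReLU on the sum
    return relu(x + residual_fn(x))


def v2_unit(x, residual_fn):
    # ResNetV2: BN/ReLU are assumed to live inside residual_fn; the sum is not activated
    return x + residual_fn(x)


def stack(unit, x, residual_fns):
    for fn in residual_fns:
        x = unit(x, fn)
    return x


zero_branches = [lambda v: 0.0] * 3  # residual branches that contribute nothing
print(stack(v1_unit, -2.0, zero_branches))  # 0.0  (clipped by ReLU)
print(stack(v2_unit, -2.0, zero_branches))  # -2.0 (identity path preserved)

toy_branches = [lambda v: 0.5 * v, lambda v: -1.0, lambda v: 0.25]
print(stack(v2_unit, -2.0, toy_branches))   # -3.75 = -2.0 + (-1.0) + (-1.0) + 0.25
```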

Kaiming He's Lua (Torch) implementation of the bottleneck unit from resnet-1k-layers:

```lua
-- Aliases assumed here, following the fb.resnet.torch convention this code builds on
require 'nn'
require 'cudnn'
local Convolution = cudnn.SpatialConvolution
local ReLU = cudnn.ReLU
local SBatchNorm = nn.SpatialBatchNormalization

local function bottleneck(nInputPlane, nOutputPlane, stride)

   local nBottleneckPlane = nOutputPlane / 4

   if nInputPlane == nOutputPlane then -- most Residual Units have this shape
      local convs = nn.Sequential()
      -- conv1x1
      convs:add(SBatchNorm(nInputPlane))
      convs:add(ReLU(true))
      convs:add(Convolution(nInputPlane,nBottleneckPlane,1,1,stride,stride,0,0))
      -- conv3x3
      convs:add(SBatchNorm(nBottleneckPlane))
      convs:add(ReLU(true))
      convs:add(Convolution(nBottleneckPlane,nBottleneckPlane,3,3,1,1,1,1))
      -- conv1x1
      convs:add(SBatchNorm(nBottleneckPlane))
      convs:add(ReLU(true))
      convs:add(Convolution(nBottleneckPlane,nOutputPlane,1,1,1,1,0,0))

      local shortcut = nn.Identity()

      return nn.Sequential()
         :add(nn.ConcatTable()
            :add(convs)
            :add(shortcut))
         :add(nn.CAddTable(true))
   else -- Residual Units for increasing dimensions
      local block = nn.Sequential()
      -- common BN, ReLU
      block:add(SBatchNorm(nInputPlane))
      block:add(ReLU(true))

      local convs = nn.Sequential()
      -- conv1x1
      convs:add(Convolution(nInputPlane,nBottleneckPlane,1,1,stride,stride,0,0))
      -- conv3x3
      convs:add(SBatchNorm(nBottleneckPlane))
      convs:add(ReLU(true))
      convs:add(Convolution(nBottleneckPlane,nBottleneckPlane,3,3,1,1,1,1))
      -- conv1x1
      convs:add(SBatchNorm(nBottleneckPlane))
      convs:add(ReLU(true))
      convs:add(Convolution(nBottleneckPlane,nOutputPlane,1,1,1,1,0,0))

      local shortcut = nn.Sequential()
      shortcut:add(Convolution(nInputPlane,nOutputPlane,1,1,stride,stride,0,0))

      return block
         :add(nn.ConcatTable()
            :add(convs)
            :add(shortcut))
         :add(nn.CAddTable(true))
   end
end
```
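To make the two branches of the Lua code easier to compare, the sketch below restates them in plain Python as (op, in_channels, out_channels, stride) tuples. It is a description, not a runnable Torch or PyTorch module, and the op labels and function name are hypothetical. It shows the design point: with matching widths every BN-ReLU sits inside the residual branch and the shortcut is a pure identity, while for increasing dimensions the first BN-ReLU is applied once before the split, so both the residual branch and the 1x1 projection shortcut see the pre-activated input.

```python
def preact_bottleneck_plan(n_in, n_out, stride):
    """Describe the Lua `bottleneck` as (shared, residual, shortcut) layer lists.

    `shared` is the BN-ReLU applied before the split (empty in the identity case).
    """
    n_mid = n_out // 4  # nBottleneckPlane = nOutputPlane / 4 (Lua uses float division)
    if n_in == n_out:
        # identity case: all BN-ReLU pairs live inside the residual branch
        shared = []
        residual = [('bn_relu', n_in, n_in, 1), ('conv1x1', n_in, n_mid, stride)]
        shortcut = [('identity', n_in, n_out, 1)]
    else:
        # increasing dimensions: the first BN-ReLU is shared by both paths
        shared = [('bn_relu', n_in, n_in, 1)]
        residual = [('conv1x1', n_in, n_mid, stride)]
        shortcut = [('conv1x1', n_in, n_out, stride)]
    residual += [
        ('bn_relu', n_mid, n_mid, 1), ('conv3x3', n_mid, n_mid, 1),
        ('bn_relu', n_mid, n_mid, 1), ('conv1x1', n_mid, n_out, 1),
    ]
    return shared, residual, shortcut


shared, residual, shortcut = preact_bottleneck_plan(256, 512, 2)
print(shared)    # [('bn_relu', 256, 256, 1)]
print(shortcut)  # [('conv1x1', 256, 512, 2)]
```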