1. Real-time facial landmark detection with OpenCV and dlib

Original article: Detect eyes, nose, lips, and jaw with dlib, OpenCV, and Python - 2017.04.10

Original article: Real-time facial landmark detection with OpenCV, Python, and dlib - 2017.04.17

Install imutils and dlib:

pip install dlib

pip install imutils

#或

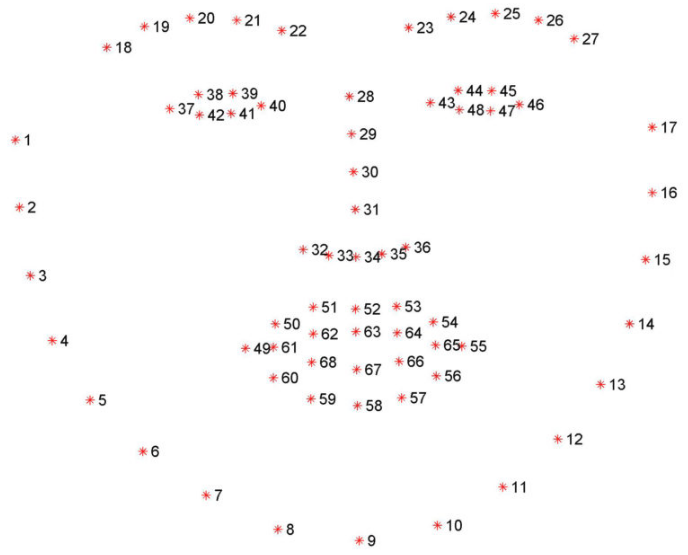

pip install --upgrade imutilsdlib 库检测的人脸特征点如图:
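To make the figure easier to use in code, the sketch below lists the index ranges of the 68 points by facial region. The ranges follow the common iBUG 300-W 68-point layout (0-based, end-exclusive) and are written out by hand as an assumption about the predictor used here; the names `LANDMARK_RANGES` and `region_points` are illustrative and not part of dlib.

```python
# Index ranges (0-based, end-exclusive) of the 68-point annotation, by region.
# Assumption: the predictor follows the usual iBUG 300-W 68-point layout.
LANDMARK_RANGES = {
    "jaw": (0, 17),
    "right_eyebrow": (17, 22),
    "left_eyebrow": (22, 27),
    "nose": (27, 36),
    "right_eye": (36, 42),
    "left_eye": (42, 48),
    "mouth": (48, 68),
}


def region_points(points, region):
    """Return the landmarks that belong to one facial region."""
    start, end = LANDMARK_RANGES[region]
    return points[start:end]


# Stand-in landmarks: 68 dummy (x, y) pairs instead of a real detection.
dummy_points = [(i, i) for i in range(68)]
left_eye = region_points(dummy_points, "left_eye")
print(len(left_eye))
```

Each eye is covered by 6 points, which is what the eye aspect ratio in section 2 relies on.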

Example: facial landmark detection on a video stream, video_facial_landmarks.py:

    # import the necessary packages
    from imutils.video import VideoStream
    from imutils import face_utils
    import datetime
    import argparse
    import imutils
    import time
    import dlib
    import cv2

    # construct the argument parser and parse the arguments
    ap = argparse.ArgumentParser()
    ap.add_argument("-p", "--shape-predictor", required=True,
        help="path to facial landmark predictor")
    ap.add_argument("-r", "--picamera", type=int, default=-1,
        help="whether or not the Raspberry Pi camera should be used")
    args = vars(ap.parse_args())

    # initialize dlib's face detector (HOG-based) and then create
    # the facial landmark predictor
    print("[INFO] loading facial landmark predictor...")
    detector = dlib.get_frontal_face_detector()
    predictor = dlib.shape_predictor(args["shape_predictor"])

    # initialize the video stream and allow the camera sensor to warm up
    print("[INFO] camera sensor warming up...")
    vs = VideoStream(usePiCamera=args["picamera"] > 0).start()
    time.sleep(2.0)

    # loop over the frames from the video stream
    while True:
        # grab the frame from the threaded video stream, resize it to
        # have a maximum width of 400 pixels, and convert it to
        # grayscale
        frame = vs.read()
        frame = imutils.resize(frame, width=400)
        gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

        # detect faces in the grayscale frame
        rects = detector(gray, 0)

        # loop over the face detections
        for rect in rects:
            # determine the facial landmarks for the face region, then
            # convert the facial landmark (x, y)-coordinates to a NumPy
            # array
            shape = predictor(gray, rect)
            shape = face_utils.shape_to_np(shape)

            # loop over the (x, y)-coordinates for the facial landmarks
            # and draw them on the image
            for (x, y) in shape:
                cv2.circle(frame, (x, y), 1, (0, 0, 255), -1)

        # show the frame
        cv2.imshow("Frame", frame)
        key = cv2.waitKey(1) & 0xFF

        # if the `q` key was pressed, break from the loop
        if key == ord("q"):
            break

    # do a bit of cleanup
    cv2.destroyAllWindows()
    vs.stop()

Run:

    $ python video_facial_landmarks.py \
        --shape-predictor shape_predictor_68_face_landmarks.dat
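The command-line handling of the script can be tried on its own. The sketch below rebuilds the same parser and passes it an argument list directly, to show that the `--shape-predictor` value is stored under the key `shape_predictor` and that the Pi camera is only selected when `--picamera` is greater than 0. The helper name `build_parser` and the file name `model.dat` are only for illustration.

```python
import argparse


def build_parser():
    """Rebuild the argument parser used by video_facial_landmarks.py."""
    ap = argparse.ArgumentParser()
    ap.add_argument("-p", "--shape-predictor", required=True,
                    help="path to facial landmark predictor")
    ap.add_argument("-r", "--picamera", type=int, default=-1,
                    help="whether or not the Raspberry Pi camera should be used")
    return ap


# Pass the arguments as a list instead of reading them from sys.argv.
args = vars(build_parser().parse_args(
    ["--shape-predictor", "shape_predictor_68_face_landmarks.dat"]))
use_pi_camera = args["picamera"] > 0  # default -1, so the webcam is used

# Hypothetical second invocation that asks for the Pi camera.
pi_args = vars(build_parser().parse_args(["-p", "model.dat", "-r", "1"]))
print(args["shape_predictor"], use_pi_camera, pi_args["picamera"] > 0)
```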

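2. Eye blink detection with OpenCV and dlib

Original article: Eye blink detection with OpenCV, Python, and dlib - 2017.04.24

A new approach to image recognition: use the eye aspect ratio to recognize blinks. Based on facial landmarks, blinks in a video stream are detected and counted.

The blink detector computes the eye aspect ratio (EAR); see the 2016 paper Real-Time Eye Blink Detection Using Facial Landmarks.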

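Traditional image-processing methods for counting blinks mainly consist of:

[1] - Eye localization.

[2] - Thresholding to find the whites of the eyes.

[3] - Determining whether the white region of the eye disappears for a period of time; each disappearance indicates one blink.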
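As a rough illustration of step [3], the sketch below counts blinks from a per-frame measurement of how much eye white is visible. It assumes that steps [1] and [2] have already produced that fraction for every frame; the function name, the sample values, and the `min_fraction` cutoff are made up for the example.

```python
def count_blinks_from_whites(white_fractions, min_fraction=0.05):
    """Count how many times the eye white disappears and then reappears.

    white_fractions: per-frame fraction of eye-region pixels classified
    as "white" by the thresholding step (hypothetical input).
    """
    blinks = 0
    eye_closed = False
    for fraction in white_fractions:
        if fraction < min_fraction:
            # the white region is gone: the eye is considered closed
            eye_closed = True
        elif eye_closed:
            # the white region is back: one closed/open cycle is complete
            blinks += 1
            eye_closed = False
    return blinks


# Made-up measurements: two short disappearances of the eye white.
fractions = [0.20, 0.21, 0.02, 0.01, 0.19, 0.20, 0.03, 0.22]
print(count_blinks_from_whites(fractions))
```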

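Computing the eye aspect ratio (EAR) is a more concise and elegant solution: it only involves a simple ratio of distances between the facial landmarks of the eye.

This blink detection method is fast, efficient, and easy to implement.

2.1. Understanding the eye aspect ratio (EAR)

With OpenCV and dlib we can detect facial landmarks and localize the important regions of the face, such as the eyes, eyebrows, nose, mouth, and jawline.

For blink detection, only the two eye regions matter.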

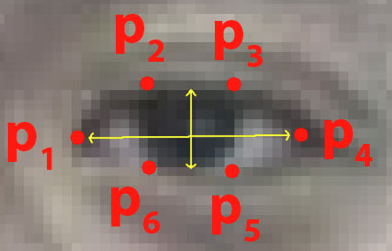

每个眼睛表示为 6 个 (x, y) 坐标,从眼睛的左角开始(正对于人脸而言),然后,顺时针到剩余部分:

眼睛的 6 个面部特征点.

基于此,可以发现眼睛特征点坐标的长和宽之间的关系.

根据 2016 年的论文 Real-Time Eye Blink Detection using Facial Landmarks,能够得到反映眼睛特征点之间关系的数学公式,即眼睛长宽比(EAR):

$$ EAR = \frac{||p_2 - p_6|| + ||p_3 - p_5||}{2||p_1 - p_4||} $$

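where $p_1, ..., p_6$ are the facial landmark locations of the eye.

The numerator of the EAR computes the distances between the vertical eye landmarks, and the denominator computes the distance between the horizontal eye landmarks. Because there is only one pair of horizontal points but two pairs of vertical points, the denominator is weighted by 2.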
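A small worked example may help here. The sketch below evaluates the formula on two sets of made-up coordinates, one for an open eye and one for a nearly closed eye. The coordinates are invented for illustration, and `math.dist` requires Python 3.8 or later. For the open eye the result is (2 + 2) / (2 · 6) ≈ 0.33.

```python
import math


def eye_aspect_ratio(eye):
    """Compute the EAR from six (x, y) points ordered p1..p6."""
    p1, p2, p3, p4, p5, p6 = eye
    # numerator: the two vertical distances ||p2 - p6|| and ||p3 - p5||
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    # denominator: the horizontal distance ||p1 - p4||, weighted by 2
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


# Made-up coordinates: the eye is 6 px wide in both cases.
open_eye = [(0, 0), (2, 1), (4, 1), (6, 0), (4, -1), (2, -1)]
closed_eye = [(0, 0), (2, 0.1), (4, 0.1), (6, 0), (4, -0.1), (2, -0.1)]

ear_open = eye_aspect_ratio(open_eye)
ear_closed = eye_aspect_ratio(closed_eye)
print(round(ear_open, 3), round(ear_closed, 3))
```

The width of the eye stays the same in both cases, so the ratio falls only because the vertical distances shrink.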

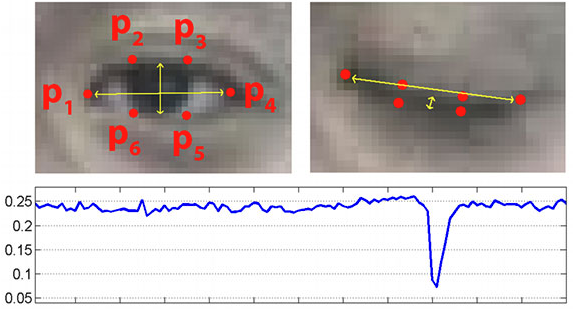

EAR 公式有趣的地方是,EAR 在眼睛张开时大致是保持恒定不变的,但在眨眼时却会发生迅速降到零的变化.

利用 EAR 公式,可以避免使用图像处理技术,只需要计算眼睛特征关键点的长宽比,就可以判断一个人是否眨眼.

更清晰的说明,如图:

图:(上左) 眼睛睁开时的特征点的可视化;(上右) 眼睛闭上时的特征点的可视化;(下) 一段时间内的眼睛长宽比情况. 明显下降的地方表示一次眨眼.
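The idea of reading blinks off the dips in the EAR curve can be sketched on a synthetic trace. The function below counts a blink whenever the EAR stays below a threshold for a minimum number of consecutive frames and then recovers. The function name, the trace, and the default values of `threshold` and `min_frames` are chosen for illustration.

```python
def count_blinks(ear_values, threshold=0.3, min_frames=3):
    """Count runs of at least min_frames consecutive EAR values below threshold."""
    blinks = 0
    below = 0  # length of the current run of low-EAR frames
    for ear in ear_values:
        if ear < threshold:
            below += 1
        else:
            # the eye is open again: count the run if it was long enough
            if below >= min_frames:
                blinks += 1
            below = 0
    return blinks


# Synthetic EAR trace: one 3-frame dip (a blink) and one 1-frame dip (noise).
trace = [0.33, 0.34, 0.32, 0.12, 0.08, 0.15, 0.33, 0.34, 0.25, 0.33, 0.32]
print(count_blinks(trace))
```

Note that a dip is only counted once the EAR rises above the threshold again, so a run of low values at the very end of the trace is not counted in this sketch.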

2.2. Python implementation of blink detection based on facial landmarks

detect_blinks.py:

    from scipy.spatial import distance as dist
    from imutils.video import FileVideoStream
    from imutils.video import VideoStream
    from imutils import face_utils
    import numpy as np
    import argparse
    import imutils
    import time
    import dlib
    import cv2

    # compute the eye aspect ratio (EAR)
    def eye_aspect_ratio(eye):
        # compute the euclidean distances between the two sets of
        # vertical eye landmarks (x, y)-coordinates
        A = dist.euclidean(eye[1], eye[5])
        B = dist.euclidean(eye[2], eye[4])

        # compute the euclidean distance between the horizontal
        # eye landmark (x, y)-coordinates
        C = dist.euclidean(eye[0], eye[3])

        # compute the eye aspect ratio
        ear = (A + B) / (2.0 * C)

        # return the eye aspect ratio
        return ear

    # construct the argument parser and parse the arguments
    ap = argparse.ArgumentParser()
    ap.add_argument("-p", "--shape-predictor", required=True,
        help="path to facial landmark predictor")
    ap.add_argument("-v", "--video", type=str, default="",
        help="path to input video file")
    args = vars(ap.parse_args())

    # define two constants, one for the eye aspect ratio to indicate
    # a blink and then a second constant for the number of consecutive
    # frames the eye must be below the threshold
    EYE_AR_THRESH = 0.3
    EYE_AR_CONSEC_FRAMES = 3

    # initialize the frame counter and the total number of blinks
    COUNTER = 0
    TOTAL = 0

    # initialize dlib's face detector (HOG-based) and then create
    # the facial landmark predictor
    print("[INFO] loading facial landmark predictor...")
    detector = dlib.get_frontal_face_detector()
    predictor = dlib.shape_predictor(args["shape_predictor"])

    # grab the indexes of the facial landmarks for the left and
    # right eye, respectively
    (lStart, lEnd) = face_utils.FACIAL_LANDMARKS_IDXS["left_eye"]
    (rStart, rEnd) = face_utils.FACIAL_LANDMARKS_IDXS["right_eye"]

    # start the video stream thread: read from the video file if one
    # was given, otherwise fall back to the default webcam
    print("[INFO] starting video stream thread...")
    if args["video"]:
        vs = FileVideoStream(args["video"]).start()
        fileStream = True
    else:
        vs = VideoStream(src=0).start()
        # vs = VideoStream(usePiCamera=True).start()
        fileStream = False
    time.sleep(1.0)

    # loop over frames from the video stream
    while True:
        # if this is a file video stream, then we need to check if
        # there are any more frames left in the buffer to process
        if fileStream and not vs.more():
            break

        # grab the frame from the threaded video file stream, resize
        # it, and convert it to grayscale
        frame = vs.read()
        frame = imutils.resize(frame, width=450)
        gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

        # detect faces in the grayscale frame
        rects = detector(gray, 0)

        # loop over the face detections
        for rect in rects:
            # determine the facial landmarks for the face region, then
            # convert the facial landmark (x, y)-coordinates to a NumPy
            # array
            shape = predictor(gray, rect)
            shape = face_utils.shape_to_np(shape)

            # extract the left and right eye coordinates, then use the
            # coordinates to compute the eye aspect ratio for both eyes
            leftEye = shape[lStart:lEnd]
            rightEye = shape[rStart:rEnd]
            leftEAR = eye_aspect_ratio(leftEye)
            rightEAR = eye_aspect_ratio(rightEye)

            # average the eye aspect ratio together for both eyes
            ear = (leftEAR + rightEAR) / 2.0

            # compute the convex hull for the left and right eye, then
            # visualize each of the eyes
            leftEyeHull = cv2.convexHull(leftEye)
            rightEyeHull = cv2.convexHull(rightEye)
            cv2.drawContours(frame, [leftEyeHull], -1, (0, 255, 0), 1)
            cv2.drawContours(frame, [rightEyeHull], -1, (0, 255, 0), 1)

            # check to see if the eye aspect ratio is below the blink
            # threshold, and if so, increment the blink frame counter
            if ear < EYE_AR_THRESH:
                COUNTER += 1

            # otherwise, the eye aspect ratio is not below the blink
            # threshold
            else:
                # if the eyes were closed for a sufficient number of
                # frames, then increment the total number of blinks
                if COUNTER >= EYE_AR_CONSEC_FRAMES:
                    TOTAL += 1

                # reset the eye frame counter
                COUNTER = 0

            # draw the total number of blinks on the frame along with
            # the computed eye aspect ratio for the frame
            cv2.putText(frame, "Blinks: {}".format(TOTAL), (10, 30),
                cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)
            cv2.putText(frame, "EAR: {:.2f}".format(ear), (300, 30),
                cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)

        # show the frame
        cv2.imshow("Frame", frame)
        key = cv2.waitKey(1) & 0xFF

        # if the `q` key was pressed, break from the loop
        if key == ord("q"):
            break

    # do a bit of cleanup
    cv2.destroyAllWindows()
    vs.stop()

Run:

    # video file input
    python detect_blinks.py \
        --shape-predictor shape_predictor_68_face_landmarks.dat \
        --video blink_detection_demo.mp4

    # webcam input
    python detect_blinks.py \
        --shape-predictor shape_predictor_68_face_landmarks.dat

2.3. Improving the blink detector

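Here, only the eye aspect ratio is used as the quantitative metric for deciding whether a person has blinked.

However, because of noise, errors in facial landmark detection, or rapid changes in viewing angle, a simple threshold on the eye aspect ratio may produce false-positive detections, that is, a blink is reported although the person did not actually blink.

To make the blink detector more robust, the authors of the EAR paper suggest:

[1] - First, for the N-th frame, compute the eye aspect ratios of frames N-6 through N+6 and concatenate them into a 13-dimensional feature vector;

[2] - Then, train an SVM classifier on these feature vectors.

This helps reduce false-positive blink detections and improves overall accuracy.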
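Step [1] can be sketched without any extra libraries. The function below builds one 13-dimensional vector per frame from a sequence of EAR values. It covers only the feature construction: training the SVM of step [2] needs labeled data and a machine-learning library and is not shown. The function name and the choice to skip the first and last six frames, which lack a full neighbourhood, are assumptions of this sketch rather than details taken from the paper.

```python
def ear_feature_vectors(ear_values, half_window=6):
    """Build one feature vector per frame that has a full +-half_window neighbourhood."""
    features = []
    for n in range(half_window, len(ear_values) - half_window):
        # EARs of frames N-6 ... N+6 (13 values for half_window=6)
        features.append(ear_values[n - half_window:n + half_window + 1])
    return features


# Synthetic trace of 20 frames with a dip around frame 10.
trace = [0.33] * 9 + [0.15, 0.08, 0.14] + [0.33] * 8
vectors = ear_feature_vectors(trace)
print(len(vectors), len(vectors[0]))
```

Each vector describes the shape of the EAR curve around one frame, so a classifier can tell a real blink from a single noisy low value.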
