Author: Changqian Yu

This article summarizes discussions on the PyTorch Forums about parameter initialization and fine-tuning.

1. Parameter initialization

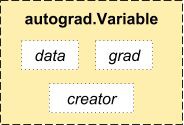

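Initializing parameters simply means assigning values to them. Learnable parameters are Variables, which wrap a Tensor and expose interfaces such as data and grad, so you can operate on them and assign to them directly. This is part of what makes PyTorch concise and efficient. (Since PyTorch 0.4, Variable has been merged into Tensor and learnable parameters are nn.Parameter objects; the same direct access still applies.)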
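As a minimal sketch of this direct access, assuming a recent PyTorch version and a hypothetical `nn.Linear` layer that is not part of the original article, the parameters can be inspected and overwritten in place:

```python
import torch
import torch.nn as nn

# Hypothetical layer, used only to illustrate direct access to parameters.
layer = nn.Linear(4, 2)

# Parameters are tensors with requires_grad=True; .grad stays None until backward().
print(type(layer.weight).__name__, layer.weight.requires_grad, layer.weight.grad)

# Assign values in place without recording the operation in the autograd graph.
with torch.no_grad():
    layer.weight.fill_(0.5)
    layer.bias.zero_()

out = layer(torch.ones(1, 4))  # each output element: 4 * 0.5 + 0 = 2.0
out.sum().backward()
weight_grad_shape = tuple(layer.weight.grad.shape)
```

Wrapping the assignment in `torch.no_grad()` is one common way to keep the initialization itself out of the autograd graph; the `.data` style used in the rest of this article has a similar effect.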

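For example, to initialize the weight and bias of a convolutional layer:

    import torch
    import torch.nn as nn

    conv1 = nn.Conv2d(3, 10, 5, stride=1, bias=True)
    # Xavier-uniform initialization of the weights, with the gain for ReLU
    nn.init.xavier_uniform_(conv1.weight, gain=nn.init.calculate_gain('relu'))
    # Constant initialization of the bias
    nn.init.constant_(conv1.bias, 0.1)

The initialization pattern below is the one recommended by the PyTorch authors: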

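    import math

    import torch.nn as nn

    def weight_init(m):
        # Use isinstance to check the type of module m
        if isinstance(m, nn.Conv2d):
            n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
            m.weight.data.normal_(0, math.sqrt(2. / n))
        elif isinstance(m, nn.BatchNorm2d):
            # weight and bias of m are learnable parameters (Variables in old
            # PyTorch), so that they can be trained through backpropagation
            m.weight.data.fill_(1)
            m.bias.data.zero_()

    # Apply to every submodule of a model with: model.apply(weight_init)

2. Fine-tuning a model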

After loading the parameters of a pretrained model, you usually need to fine-tune it. There are several ways to do so.

2.1 Fine-tuning only the output layer

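Sometimes, after loading a pretrained model, you only want to tune the last few layers and leave the others untrained. Not training a layer means not computing gradients for it, and PyTorch's requires_grad flag makes this easy to control.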

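In PyTorch versions before 0.4, each Variable carries two flags, requires_grad and volatile, that indicate whether gradients are computed for it: setting requires_grad = False or volatile = True disables gradient computation for that Variable. Since PyTorch 0.4, volatile has been removed; set requires_grad = False on parameters to freeze them, and use the torch.no_grad() context manager for inference.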
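The effect of requires_grad can be checked on a toy model. The sketch below assumes a recent PyTorch version; `backbone` and `head` are hypothetical stand-ins for the pretrained layers and the new output layer, not names from the original article:

```python
import torch
import torch.nn as nn

# Hypothetical stand-in for a pretrained network: a "backbone" plus a "head".
backbone = nn.Linear(8, 4)
head = nn.Linear(4, 2)
model = nn.Sequential(backbone, nn.ReLU(), head)

# Freeze the backbone: autograd will not compute gradients for these parameters.
for param in backbone.parameters():
    param.requires_grad = False

loss = model(torch.randn(3, 8)).sum()
loss.backward()

# Frozen parameters keep grad=None; the head receives gradients as usual.
frozen_grads = [p.grad for p in backbone.parameters()]
head_grads = [p.grad for p in head.parameters()]
```

After `backward()`, only the head has populated `.grad` tensors, which is why passing just the head's parameters to the optimizer is enough.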

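    import torch.nn as nn
    import torch.optim as optim
    import torchvision.models as models

    # Other pretrained models can be loaded the same way, for example:
    # model = models.vgg11(pretrained=True)
    # model = models.vgg16(pretrained=True)
    # model = models.vgg16_bn(pretrained=True)
    # model = models.resnet34(pretrained=True)
    # model = models.resnet50(pretrained=True)
    # (Newer torchvision releases prefer the weights= argument over pretrained=True.)
    model = models.resnet18(pretrained=True)

    # Freeze all pretrained parameters
    for param in model.parameters():
        param.requires_grad = False

    # Number of input features of the original fc layer
    fc_features = model.fc.in_features
    # Replace the final fully connected layer so that it predicts 100 classes;
    # parameters of a newly constructed module have requires_grad=True by default
    model.fc = nn.Linear(fc_features, 100)

    # Optimize only the final classification layer
    optimizer = optim.SGD(model.fc.parameters(), lr=1e-2, momentum=0.9)

At test time, disabling gradient tracking for the forward pass saves GPU memory. The original approach of setting volatile=True on input_data applies only to PyTorch before 0.4; in later versions, wrap inference in torch.no_grad().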
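A minimal sketch of gradient-free inference, assuming PyTorch 0.4 or later. The small `nn.Sequential` classifier is a hypothetical stand-in for the fine-tuned model, chosen so the example runs without downloading weights:

```python
import torch
import torch.nn as nn

# Hypothetical small classifier standing in for the fine-tuned model.
model = nn.Sequential(nn.Linear(16, 8), nn.ReLU(), nn.Linear(8, 100))
model.eval()  # switch layers such as BatchNorm/Dropout to inference behavior

input_data = torch.randn(4, 16)

# No autograd graph is built inside this block, so intermediate
# activations are not kept for a backward pass.
with torch.no_grad():
    logits = model(input_data)

predictions = logits.argmax(dim=1)
```

Note that `model.eval()` and `torch.no_grad()` do different things: the first changes layer behavior, the second turns off gradient tracking, so test code usually needs both.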

2.2 Modifying internal layers of the model

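Fine-tuning only the output layer is suitable only for simple modifications. If you need to change the internal structure of the network, overwrite the parameters instead: first define a network with a similar structure, then extract the weights of the pretrained model and copy them into the custom network. Taking ResNet as an example: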

    # -*- coding: utf-8 -*-
    import math

    import torch
    import torch.nn as nn
    import torchvision.models as models
    from torchvision.models.resnet import Bottleneck


    class CNN(nn.Module):

        def __init__(self, block, layers, num_classes=100):
            super(CNN, self).__init__()
            self.inplanes = 64
            self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                                   bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            self.layer1 = self._make_layer(block, 64, layers[0])
            self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
            self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
            self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
            self.avgpool = nn.AvgPool2d(7, stride=1)
            # Custom ConvTranspose2d layer
            self.convtranspose1 = nn.ConvTranspose2d(
                2048, 2048, kernel_size=3, stride=1, padding=1,
                output_padding=0, groups=1, bias=False, dilation=1)
            # Custom MaxPool2d layer
            self.maxpool2 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)
            # The original fc layer is removed and replaced by f_add
            self.f_add = nn.Linear(2048, num_classes)

            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                    m.weight.data.normal_(0, math.sqrt(2. / n))
                elif isinstance(m, nn.BatchNorm2d):
                    m.weight.data.fill_(1)
                    m.bias.data.zero_()

        def _make_layer(self, block, planes, blocks, stride=1):
            downsample = None
            if stride != 1 or self.inplanes != planes * block.expansion:
                downsample = nn.Sequential(
                    nn.Conv2d(self.inplanes, planes * block.expansion,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(planes * block.expansion),
                )

            layers = []
            layers.append(block(self.inplanes, planes, stride, downsample))
            self.inplanes = planes * block.expansion
            for i in range(1, blocks):
                layers.append(block(self.inplanes, planes))

            return nn.Sequential(*layers)

        def forward(self, x):
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)

            x = self.avgpool(x)
            # Forward pass through the custom layers; they expect a 4-D
            # (N, C, H, W) tensor, so flatten only afterwards
            x = self.convtranspose1(x)
            x = self.maxpool2(x)
            x = x.view(x.size(0), -1)
            x = self.f_add(x)

            return x


    # Create the models. The custom network uses Bottleneck blocks (2048
    # output channels), so it is paired with the ResNet-50 layout and weights;
    # resnet18 uses BasicBlock and its layer shapes would not match.
    resnet50 = models.resnet50(pretrained=True)
    cnn = CNN(Bottleneck, [3, 4, 6, 3])
    model_dict = cnn.state_dict()
    # Load the pretrained weights
    pretrained_dict = resnet50.state_dict()
    # Drop the entries of pretrained_dict that are not in model_dict
    pretrained_dict = {k: v for k, v in pretrained_dict.items() if k in model_dict}
    # Update the model parameters in model_dict
    model_dict.update(pretrained_dict)
    # Load the state_dict
    cnn.load_state_dict(model_dict)
    print(cnn)

In short, remove the keys that do not match the current model:

    import torchvision

    resnet18 = torchvision.models.resnet18(pretrained=True)
    pretrained_dict = resnet18.state_dict()
    model = ...
    model_dict = model.state_dict()

    # 1. filter out unnecessary keys
    pretrained_dict = {k: v for k, v in pretrained_dict.items() if k in model_dict}
    # 2. overwrite entries in the existing state dict
    model_dict.update(pretrained_dict)
    # 3. load the new state dict
    model.load_state_dict(model_dict)
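Filtering by key alone still fails in `load_state_dict` when a shared key has a different shape in the two networks. The sketch below wraps the three steps in a hypothetical helper, `load_matching_weights`, which is not part of the original article, and adds a shape check as an assumption about what is usually wanted. Two toy networks are used so the example runs without downloading weights:

```python
import torch
import torch.nn as nn


def load_matching_weights(model, pretrained_dict):
    """Copy entries whose key and shape both match; return the copied keys.

    Hypothetical helper for illustration. The shape check is an addition that
    the three steps above do not perform.
    """
    model_dict = model.state_dict()
    matched = {k: v for k, v in pretrained_dict.items()
               if k in model_dict and v.shape == model_dict[k].shape}
    model_dict.update(matched)
    model.load_state_dict(model_dict)
    return sorted(matched)


# Toy "pretrained" and "custom" networks that share only their first layer.
source = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 10))
target = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 3))

copied = load_matching_weights(target, source.state_dict())
print(copied)
```

Returning the list of copied keys makes it easy to confirm which layers actually received pretrained weights and which kept their fresh initialization.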

2.3 Global fine-tuning

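Sometimes you want to fine-tune the whole network but use a different learning rate for the replaced layers than for the rest. In that case, give the new layers and the other layers separate learning rates in the optimizer. For example: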

    import torch

    # ids of the parameters of the replaced fc layer
    ignored_params = list(map(id, model.fc.parameters()))
    # all remaining (pretrained) parameters
    base_params = filter(lambda p: id(p) not in ignored_params,
                         model.parameters())

    optimizer = torch.optim.SGD([
        {'params': base_params},
        {'params': model.fc.parameters(), 'lr': 1e-2}
    ], lr=1e-3, momentum=0.9)

Here base_params are trained with a learning rate of 1e-3 and model.fc.parameters() with 1e-2; momentum is shared by both groups.
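To check which settings each group ends up with, one can inspect `optimizer.param_groups`. This is a minimal sketch; `TinyNet` is a hypothetical stand-in for a pretrained network with an `fc` head, used so the example runs without downloading weights:

```python
import torch
import torch.nn as nn


class TinyNet(nn.Module):
    """Hypothetical stand-in for a pretrained network with an `fc` head."""

    def __init__(self):
        super().__init__()
        self.features = nn.Linear(8, 4)
        self.fc = nn.Linear(4, 2)

    def forward(self, x):
        return self.fc(torch.relu(self.features(x)))


model = TinyNet()
fc_ids = {id(p) for p in model.fc.parameters()}
base_params = [p for p in model.parameters() if id(p) not in fc_ids]

optimizer = torch.optim.SGD([
    {'params': base_params},                        # falls back to lr=1e-3
    {'params': model.fc.parameters(), 'lr': 1e-2},  # overrides the default
], lr=1e-3, momentum=0.9)

# Each group reports its own lr; momentum comes from the shared defaults.
for group in optimizer.param_groups:
    print(len(group['params']), group['lr'], group['momentum'])
```

Options given in a group's dictionary override the keyword defaults passed to the optimizer, and any option a group omits falls back to those defaults.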