GitHub project - tensorflow-yolov3

Author: YunYang1994

Paper: YOLOv3

YunYang1994 recently open-sourced a TensorFlow (TF-Slim) reimplementation of YOLOv3 that also supports training on custom datasets.

The open-source project consists of:

- YOLO v3 network architecture

- Weights converter (exports the loaded COCO weights as a TF checkpoint)

- Basic test demo

- GPU and CPU versions of NMS

- Training pipeline

- COCO mAP computation

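1. YOLOV3 main principles

The YOLO object detector uses features learned by a deep convolutional network to detect objects.

As described in 木盏's blog post, compared with YOLOV1 and YOLOV2, YOLOV3 retains the following:

[1] - Divide and conquer. The YOLO family detects objects by dividing the image into grid cells; the versions differ only in the number of cells.

[2] - Leaky ReLU as the activation function.

[3] - End-to-end training: a single loss function is enough, and only the input and output ends of the network need attention.

[4] - Since YOLOV2, Batch Normalization is used for regularization, faster convergence, and reduced overfitting, with a BN layer and a Leaky ReLU layer placed after each convolutional layer.

[5] - Multi-scale training, which trades off speed against accuracy: a faster model is somewhat less accurate, and a more accurate model is somewhat slower.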

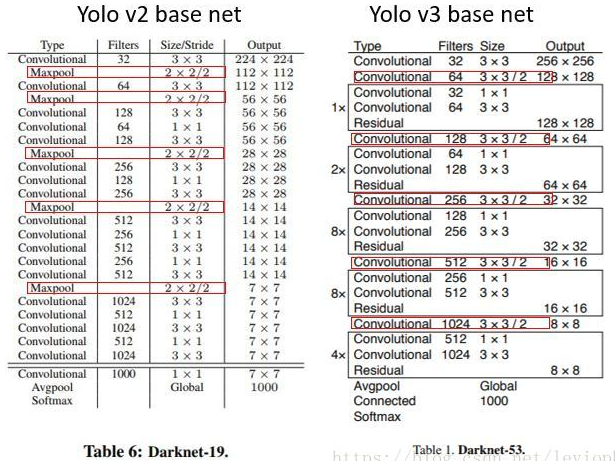

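Much of the improvement across the YOLO family also comes from better backbone networks, for example from darknet-19 in YOLOV2 to darknet-53 in YOLOV3. YOLOV3 also provides tiny darknet. When speed matters most, tiny-darknet can serve as the backbone; when accuracy matters most, darknet-53 can. This flexibility makes the YOLO family well suited to engineering use.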

1.1. 网络结构

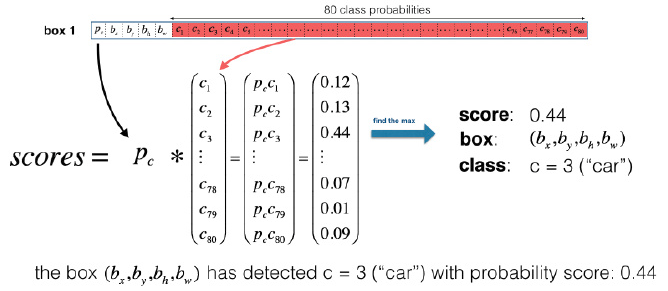

该项目里使用了预训练的网络权重,其中,共有 80 个训练的 yolo 物体类别(COCO 数据集).

记物体类别名 - coco.names 为 c,其是从 1 到 80 的整数,每个数字分别表示对应的类别名标签. 如,c=3 表示的分类物体类别为 cat.

深度卷积层学习的图像特征,送入到分类器和回归器中,以进行检测预测.(边界框坐标,对应的类别标签,等).

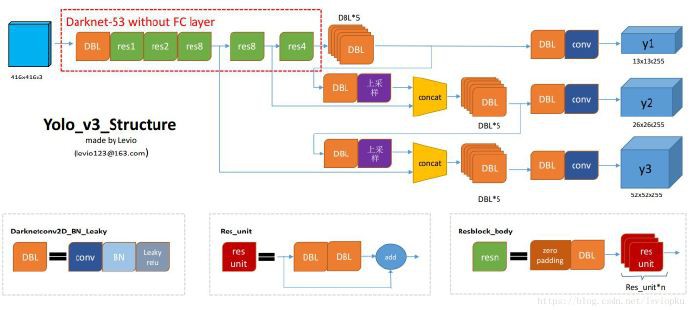

As shown in the figure above (from yolo系列之yolo v3【深度解析】 - 木盏 - CSDN):

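The YOLOV3 architecture has no pooling layers and no fully connected layers. During the forward pass, tensor sizes are changed by adjusting the convolution stride; for example, stride=(2, 2) halves both the width and the height of the image (that is, the area shrinks to 1/4 of the original).

In YOLOV2, by contrast, the tensor is downsampled 5 times (with MaxPool), which shrinks the feature map to $1/2^5$, i.e. 1/32, of the input size. For example, with a 416x416 input image the output feature map is 13x13 (416/32=13).

Like YOLOV2, the YOLOV3 backbone shrinks the output feature map to 1/32 of the input image. The input image size must therefore be a multiple of 32.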
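The size arithmetic above can be checked with a few lines of Python. This is only a sketch: the helper name `feature_map_size` and its `num_downsamples` parameter are illustrative and do not come from the project.

```python
def feature_map_size(input_size, num_downsamples=5):
    """Spatial size left after `num_downsamples` stride-2 steps.

    Five halvings give a total reduction factor of 2**5 = 32, so the
    input size has to be a multiple of 32 for the result to be exact.
    """
    factor = 2 ** num_downsamples
    if input_size % factor != 0:
        raise ValueError(
            f"input size {input_size} is not a multiple of {factor}")
    return input_size // factor


print(feature_map_size(416))  # 13
print(feature_map_size(608))  # 19
```

An input such as 500 is rejected, because 500 is not divisible by 32.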

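- DBL: the basic building block of YOLOV3, corresponding to Darknetconv2d_BN_Leaky in the code, i.e. convolution + BN + Leaky ReLU. In YOLOV3, BN and Leaky ReLU are inseparable from the convolutional layer (except for the last convolutional layer); together they form the smallest component.

- resn: n is a number giving how many res_units a res_block contains, e.g. res1, res2, … , res8. YOLOV3 borrows the residual structure of ResNet, which allows the network to be deeper.

- concat: tensor concatenation. An intermediate darknet layer is concatenated with the upsampled output of a later layer. Concatenation differs from the add operation of a residual layer: concatenation enlarges the tensor along the concatenated dimension, whereas add simply sums element-wise and leaves the tensor dimensions unchanged.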
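The difference between add and concat is easiest to see in the resulting shapes. The sketch below only does shape bookkeeping in plain Python; `add_shape` and `concat_shape` are hypothetical helpers, and the example shapes (a 26x26x256 upsampled tensor joined with a 26x26x512 backbone tensor) are read off the project's model code for a 416x416 input.

```python
def add_shape(a, b):
    """Shape after element-wise addition (residual shortcut)."""
    if a != b:
        raise ValueError(f"add needs identical shapes, got {a} and {b}")
    return a


def concat_shape(a, b, axis=-1):
    """Shape after concatenating two tensors along `axis`."""
    if len(a) != len(b):
        raise ValueError("concat needs tensors of the same rank")
    axis = axis % len(a)
    for i, (x, y) in enumerate(zip(a, b)):
        if i != axis and x != y:
            raise ValueError(f"dimension {i} differs: {x} vs {y}")
    out = list(a)
    out[axis] = a[axis] + b[axis]
    return tuple(out)


# Residual add: the shape is unchanged.
print(add_shape((26, 26, 512), (26, 26, 512)))     # (26, 26, 512)

# Concat along channels: the channel counts add up.
print(concat_shape((26, 26, 256), (26, 26, 512)))  # (26, 26, 768)
```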

Darknet-19 vs Darknet-53 layer structure (from yolo系列之yolo v3【深度解析】 - 木盏 - CSDN)

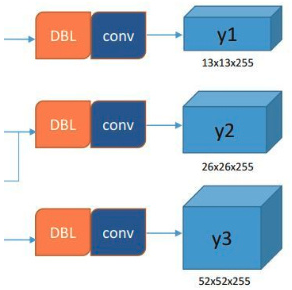

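YOLOV3 outputs feature maps at three different scales, y1, y2 and y3, as shown in the architecture figure.

This multi-scale prediction borrows from FPN (Feature Pyramid Networks) to predict objects of different sizes: the finer the grid cells, the finer the objects that can be detected.

YOLOV3 has each grid cell predict 3 boxes. Each box needs five parameters (x, y, w, h, confidence) plus the probabilities of the 80 classes, which gives 3*(5 + 80) = 255.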
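As a quick check of the channel count, the sketch below computes the shape of each output feature map. The function name `yolo_output_shapes` is made up for illustration, and the strides 32, 16 and 8 are an assumption inferred from the 13x13, 26x26 and 52x52 grids that the project code reports for a 416x416 input.

```python
def yolo_output_shapes(input_size=416, num_classes=80,
                       boxes_per_cell=3, strides=(32, 16, 8)):
    """Return (height, width, channels) for each output scale."""
    # 4 box parameters + 1 confidence + one probability per class
    channels = boxes_per_cell * (5 + num_classes)
    return [(input_size // s, input_size // s, channels) for s in strides]


for shape in yolo_output_shapes():
    print(shape)
# (13, 13, 255)
# (26, 26, 255)
# (52, 52, 255)
```

With a custom dataset of, say, 20 classes, the same formula gives 3*(5 + 20) = 75 channels.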

Figure from YOLOv3代码分析(Keras+Tensorflow):

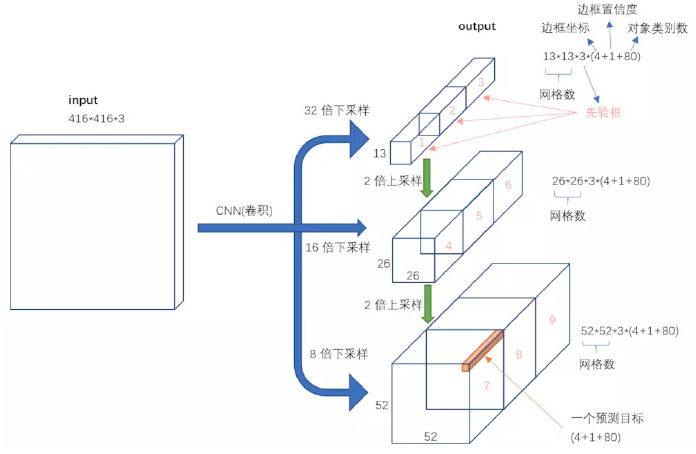

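1.2. Network input and output

[1] - Network input: [None, 416, 416, 3]

[2] - Network output: the confidence that a box contains an object, the list of box positions, and the detected class names. Each box is represented by six components (Rx, Ry, Rh, Rw, Pc, C1,...Cn), where the last component c = (C1,...Cn) is itself an 80-dimensional vector (n=80). The final vector for each box therefore has size 5 + 80 = 85, as shown in the figure:

In the figure, the first number Pc is the objectness confidence; the second through fifth numbers bx, by, bh, bw give the box coordinates; and each of the last 80 numbers is the output probability of the corresponding class.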
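To make the 85-number layout concrete, here is a minimal sketch that splits one box vector into its parts. It assumes the order (x, y, w, h, confidence, class probabilities), which follows the (x, y, w, h, confidence) listing given earlier rather than the figure, where Pc comes first. The helper names are hypothetical, and multiplying the confidence by each class probability to get a per-class score is a common YOLO convention assumed here, not something stated in the text.

```python
def split_prediction(vec, num_classes=80):
    """Split one box vector into (box, confidence, class_probs).

    Assumed layout: [x, y, w, h, confidence, p_1, ..., p_n].
    """
    if len(vec) != 5 + num_classes:
        raise ValueError(
            f"expected {5 + num_classes} numbers, got {len(vec)}")
    box = tuple(vec[0:4])
    confidence = vec[4]
    class_probs = list(vec[5:])
    return box, confidence, class_probs


def class_scores(confidence, class_probs):
    """Per-class score: objectness confidence times class probability."""
    return [confidence * p for p in class_probs]


# Illustrative vector whose most likely class has index 2.
probs = [0.0] * 80
probs[2] = 0.8
vec = [208.0, 208.0, 116.0, 90.0, 0.9] + probs

box, confidence, class_probs = split_prediction(vec)
scores = class_scores(confidence, class_probs)
best = max(range(len(scores)), key=scores.__getitem__)
print(box, confidence, best)
```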

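1.3. Filtering bounding boxes with a score threshold

The output may contain many boxes, some of which are false positives or overlap one another. For example, with an input image of size [416, 416, 3], YOLOV3 uses 9 anchor boxes in total (3 anchor boxes per scale), which yields (52x52 + 26x26 + 13x13)x3=10647 boxes.

The number of output boxes therefore needs to be reduced, for example by setting a score threshold.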

Input parameters:

- boxes: tensor of shape [10647, 4]

- scores: tensor of shape [10647, 80] containing the detection scores for 80 classes

- score_thresh: float value; boxes with a low score are filtered out
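The same filtering idea can be sketched without TensorFlow. The helpers `count_boxes` and `filter_by_score` are illustrative names, the strides 32, 16 and 8 are assumed from the 13x13, 26x26 and 52x52 grids, and the toy boxes and scores are made up so the result is easy to follow.

```python
def count_boxes(input_size=416, strides=(32, 16, 8), anchors_per_scale=3):
    """Total number of predicted boxes over all scales."""
    cells = sum((input_size // s) ** 2 for s in strides)
    return cells * anchors_per_scale


def filter_by_score(boxes, scores, score_thresh=0.4):
    """Keep every (box, class_id, score) whose score reaches the threshold.

    `boxes` has one entry per box; `scores` has one list of per-class
    scores per box, mirroring the [num_boxes, num_classes] layout.
    """
    kept = []
    for box, per_class in zip(boxes, scores):
        for class_id, score in enumerate(per_class):
            if score >= score_thresh:
                kept.append((box, class_id, score))
    return kept


print(count_boxes())  # 10647

# Toy data: 3 boxes, 2 classes.
boxes = [(10, 10, 50, 50), (20, 20, 60, 60), (100, 100, 150, 150)]
scores = [[0.9, 0.1], [0.3, 0.2], [0.05, 0.6]]
for item in filter_by_score(boxes, scores):
    print(item)
```

Only the first box (class 0) and the third box (class 1) survive the 0.4 threshold; the second box is dropped because none of its class scores reaches it.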

For example:

# Step 1: Create a filtering mask based on "box_class_scores" by using "threshold".
score_thresh = 0.4
mask = tf.greater_equal(scores, tf.constant(score_thresh))

1.4. NMS processing

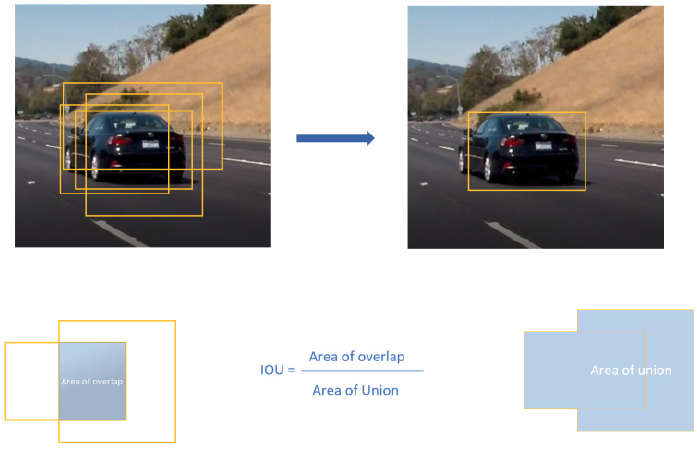

After filtering the predicted boxes with a score threshold, many overlapping boxes may still remain. The next step is to apply the NMS (non-maximum suppression) algorithm:

- Discard all boxes with Pc <= 0.4

- While there are any remaining boxes:

  - Pick the box with the largest Pc

  - Output that as a prediction

  - Discard any remaining boxes with IOU >= 0.5 with the box output in the previous step

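For example:

# max_boxes: maximum number of boxes to keep per class
# (tf.image.non_max_suppression requires it as max_output_size)
for i in range(num_classes):
    tf.image.non_max_suppression(boxes, scores[:, i], max_output_size=max_boxes, iou_threshold=0.5)

NMS relies on the IoU (Intersection over Union) function. The figure illustrates NMS: the input is 4 overlapping boxes and the output is a single box.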
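The greedy procedure listed above can be written out in plain Python for a single class. This is a sketch rather than the project's implementation: `iou` and `nms` are illustrative names, boxes are assumed to be (x_min, y_min, x_max, y_max) tuples, and the four sample boxes are invented to mirror the "4 overlapping boxes in, 1 box out" illustration.

```python
def iou(a, b):
    """Intersection over Union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, score_thresh=0.4, iou_thresh=0.5):
    """Greedy non-maximum suppression; returns indices of the kept boxes."""
    # Discard low-score boxes, then visit the rest from best to worst.
    order = sorted((i for i, s in enumerate(scores) if s > score_thresh),
                   key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Drop every remaining box that overlaps the chosen one too much.
        order = [i for i in order if iou(boxes[best], boxes[i]) < iou_thresh]
    return keep


boxes = [(10, 10, 110, 110), (12, 12, 112, 112),
         (8, 9, 108, 111), (15, 14, 115, 113)]
scores = [0.9, 0.8, 0.75, 0.6]
print(nms(boxes, scores))  # [0]
```

All four boxes overlap the highest-scoring one with an IoU above 0.5, so only index 0 is kept. A box far away from the cluster would survive as a second detection.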


2. Getting started with the YOLOV3 project

[1] - Download the project:

git clone https://github.com/YunYang1994/tensorflow-yolov3.git

[2] - Install the project dependencies:

cd tensorflow-yolov3
pip3 install -r ./docs/requirements.txt

requirements.txt:

numpy==1.15.1
Pillow==5.3.0
scipy==1.1.0
tensorflow-gpu==1.11.0
wget==3.2

[3] - Export the loaded COCO weights as a TF checkpoint (yolov3.ckpt) and a frozen graph (yolov3_gpu_nms.pb).

下载 yolov3.weight,并放到 ./checkpoint/ 路径:

wget https://github.com/YunYang1994/tensorflow-yolov3/releases/download/v1.0/yolov3.weights导出权重:

python3 convert_weight.py --convert --freeze[4] - 利用路径 ./checkpoint/ 中的 .pb 文件,运行测试 demo:

python3 nms_demo.py

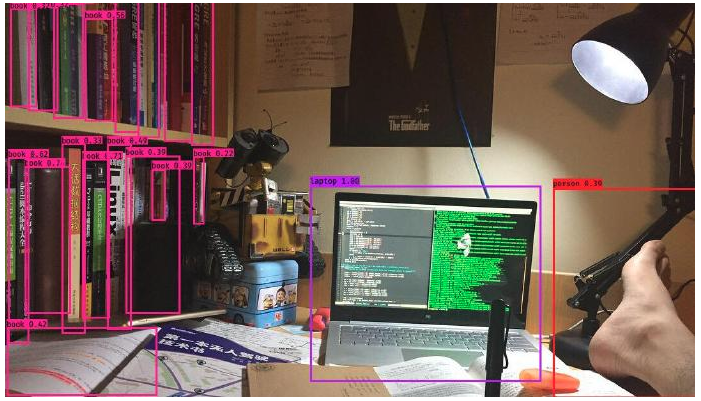

python3 video_demo.py # if use camera, set video_path = 0如:

3. Training YOLOV3

3.1. Download the pretrained weights file

YOLOV3 training continues from model parameters pretrained on ImageNet (file name: darknet53.conv.74, size 76MB).

darknet53.conv.74 download link: https://pjreddie.com/media/files/darknet53.conv.74.

wget https://pjreddie.com/media/files/darknet53.conv.74

3.2. Quick-start training

Here is a simple example of the YOLOV3 training process.

Use python3 core/convert_tfrecord.py to convert the image dataset into tfrecords files.

python3 core/convert_tfrecord.py \
  --dataset /data/train_data/quick_train_data/quick_train_data.txt \
  --tfrecord_path_prefix /data/train_data/quick_train_data/tfrecords/quick_train_data

python3 quick_train.py # start training

3.3. Training on the COCO dataset

[1] - First, download the COCO2017 dataset and place it in ./data/train_data/COCO.

cd data/train_data/COCO
wget http://images.cocodataset.org/zips/train2017.zip
unzip train2017.zip
wget http://images.cocodataset.org/annotations/annotations_trainval2017.zip
unzip annotations_trainval2017.zip

[2] - Extract useful information from the COCO dataset, such as bounding boxes and category ids, and generate a .txt file.

python3 core/extract_coco.py --dataset_info_path ./data/train_data/COCO/train2017.txt

This produces ./data/train_data/COCO/train2017.txt. Each line is one sample, for example:

/path/to/data/train_data/train2017/000000458533.jpg 20 18.19 6.32 424.13 421.83 20 323.86 2.65 640.0 421.94
/path/to/data/train_data/train2017/000000514915.jpg 16 55.38 132.63 519.84 380.4
# image_path, category_id, x_min, y_min, x_max, y_max, category_id, x_min, y_min, ...

[3] - Next, convert the image dataset into a .tfrecord dataset, which stores the data as binary files. Model training can then begin.

python3 core/convert_tfrecord.py \
  --dataset ./data/train_data/COCO/train2017.txt \
  --tfrecord_path_prefix ./data/train_data/COCO/tfrecords/coco \
  --num_tfrecords 100
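Each line of train2017.txt follows the format shown earlier: an image path followed by groups of category_id, x_min, y_min, x_max, y_max. As a rough sketch of how such a line could be read back, the hypothetical helper `parse_annotation_line` below splits it into a path and a list of objects. It assumes the path contains no spaces and is not taken from the project's own conversion script.

```python
def parse_annotation_line(line):
    """Parse 'image_path (category_id x_min y_min x_max y_max)...'.

    Returns (image_path, objects), where each object is a tuple
    (category_id, (x_min, y_min, x_max, y_max)).
    """
    parts = line.split()
    if not parts:
        raise ValueError("empty annotation line")
    image_path, values = parts[0], parts[1:]
    if len(values) % 5 != 0:
        raise ValueError("expected groups of 5 numbers after the path")
    objects = []
    for i in range(0, len(values), 5):
        category_id = int(values[i])
        x_min, y_min, x_max, y_max = (float(v) for v in values[i + 1:i + 5])
        objects.append((category_id, (x_min, y_min, x_max, y_max)))
    return image_path, objects


line = ("/path/to/data/train_data/train2017/000000458533.jpg "
        "20 18.19 6.32 424.13 421.83 20 323.86 2.65 640.0 421.94")
path, objects = parse_annotation_line(line)
print(path)
for category_id, box in objects:
    print(category_id, box)
```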

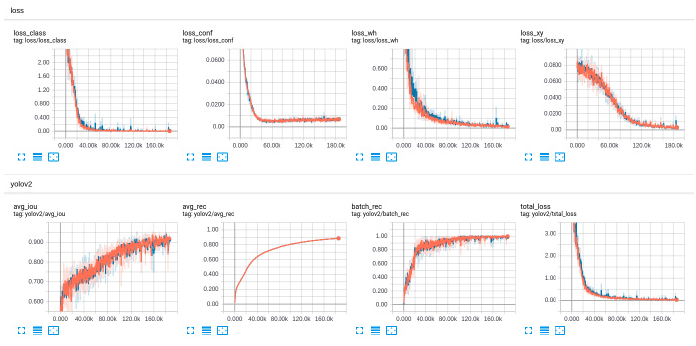

python3 train.py以 YOLOV2 的训练过程为例:

3.4. Evaluation on the COCO dataset (to be continued)

cd data/train_data/COCO
wget http://images.cocodataset.org/zips/test2017.zip
wget http://images.cocodataset.org/annotations/image_info_test2017.zip
unzip test2017.zip
unzip image_info_test2017.zip

4. YOLOV3 model definition

#! /usr/bin/env python3
# coding=utf-8
#================================================================
#   Copyright (C) 2018 * Ltd. All rights reserved.
#
#   Editor      : VIM
#   File name   : common.py
#   Author      : YunYang1994
#   Created date: 2018-11-20 10:22:32
#   Description : some basic layers for darknet53 and yolov3
#
#================================================================

import tensorflow as tf
slim = tf.contrib.slim


def _conv2d_fixed_padding(inputs, filters, kernel_size, strides=1):
    # For strided convolutions, pad explicitly and use 'VALID' padding
    if strides > 1: inputs = _fixed_padding(inputs, kernel_size)
    inputs = slim.conv2d(inputs, filters, kernel_size, stride=strides,
                         padding=('SAME' if strides == 1 else 'VALID'))
    return inputs


@tf.contrib.framework.add_arg_scope
def _fixed_padding(inputs, kernel_size, *args, mode='CONSTANT', **kwargs):
    """
    Pads the input along the spatial dimensions independently of input size.

    Args:
      inputs: A tensor of size [batch, channels, height_in, width_in] or
        [batch, height_in, width_in, channels] depending on data_format.
      kernel_size: The kernel to be used in the conv2d or max_pool2d operation.
                   Should be a positive integer.
      mode: The mode for tf.pad.

    Returns:
      A tensor with the same format as the input with the data either intact
      (if kernel_size == 1) or padded (if kernel_size > 1).
    """
    pad_total = kernel_size - 1
    pad_beg = pad_total // 2
    pad_end = pad_total - pad_beg

    # Only the height and width axes of an NHWC tensor are padded
    padded_inputs = tf.pad(inputs, [[0, 0], [pad_beg, pad_end],
                                    [pad_beg, pad_end], [0, 0]],
                           mode=mode)
    return padded_inputs


#! /usr/bin/env python3

# coding=utf-8

#================================================================

# Copyright (C) 2018 * Ltd. All rights reserved.

#

# Editor : VIM

# File name : yolov3.py

# Author : YunYang1994

# Created date: 2018-11-21 18:41:35

# Description : YOLOv3: An Incremental Improvement

#

#================================================================

import numpy as np

import tensorflow as tf

from core import common, utils

slim = tf.contrib.slim

class darknet53(object):
    """network for performing feature extraction"""

    def __init__(self, inputs):
        self.outputs = self.forward(inputs)

    def _darknet53_block(self, inputs, filters):
        """
        implement residuals block in darknet53
        """
        shortcut = inputs
        inputs = common._conv2d_fixed_padding(inputs, filters * 1, 1)
        inputs = common._conv2d_fixed_padding(inputs, filters * 2, 3)

        inputs = inputs + shortcut
        return inputs

    def forward(self, inputs):

        inputs = common._conv2d_fixed_padding(inputs, 32, 3, strides=1)
        inputs = common._conv2d_fixed_padding(inputs, 64, 3, strides=2)
        inputs = self._darknet53_block(inputs, 32)
        inputs = common._conv2d_fixed_padding(inputs, 128, 3, strides=2)

        for i in range(2):
            inputs = self._darknet53_block(inputs, 64)

        inputs = common._conv2d_fixed_padding(inputs, 256, 3, strides=2)

        for i in range(8):
            inputs = self._darknet53_block(inputs, 128)

        route_1 = inputs
        inputs = common._conv2d_fixed_padding(inputs, 512, 3, strides=2)

        for i in range(8):
            inputs = self._darknet53_block(inputs, 256)

        route_2 = inputs
        inputs = common._conv2d_fixed_padding(inputs, 1024, 3, strides=2)

        for i in range(4):
            inputs = self._darknet53_block(inputs, 512)

        return route_1, route_2, inputs

class yolov3(object):

    def __init__(self, num_classes=80,
                 batch_norm_decay=0.9,
                 leaky_relu=0.1,
                 anchors_path='./data/yolo_anchors.txt'):

        # self._ANCHORS = [[10 ,13], [16 , 30], [33 , 23],
        #                  [30 ,61], [62 , 45], [59 ,119],
        #                  [116,90], [156,198], [373,326]]
        self._ANCHORS = utils.get_anchors(anchors_path)
        self._BATCH_NORM_DECAY = batch_norm_decay
        self._LEAKY_RELU = leaky_relu
        self._NUM_CLASSES = num_classes
        self.feature_maps = [] # [[None, 13, 13, 255],
                               #  [None, 26, 26, 255],
                               #  [None, 52, 52, 255]]

    def _yolo_block(self, inputs, filters):
        inputs = common._conv2d_fixed_padding(inputs, filters * 1, 1)
        inputs = common._conv2d_fixed_padding(inputs, filters * 2, 3)
        inputs = common._conv2d_fixed_padding(inputs, filters * 1, 1)
        inputs = common._conv2d_fixed_padding(inputs, filters * 2, 3)
        inputs = common._conv2d_fixed_padding(inputs, filters * 1, 1)
        route = inputs
        inputs = common._conv2d_fixed_padding(inputs, filters * 2, 3)
        return route, inputs

    def _detection_layer(self, inputs, anchors):
        num_anchors = len(anchors)
        feature_map = slim.conv2d(inputs,
                                  num_anchors * (5 + self._NUM_CLASSES),
                                  1,
                                  stride=1,
                                  normalizer_fn=None,
                                  activation_fn=None,
                                  biases_initializer=tf.zeros_initializer())
        return feature_map

    def _reorg_layer(self, feature_map, anchors):

        num_anchors = len(anchors) # num_anchors=3
        grid_size = feature_map.shape.as_list()[1:3]

        stride = tf.cast(self.img_size // grid_size, tf.float32)
        feature_map = tf.reshape(feature_map,
                                 [-1, grid_size[0], grid_size[1],
                                  num_anchors, 5 + self._NUM_CLASSES])

        box_centers, box_sizes, conf_logits, prob_logits = tf.split(
            feature_map, [2, 2, 1, self._NUM_CLASSES], axis=-1)

        box_centers = tf.nn.sigmoid(box_centers)

        grid_x = tf.range(grid_size[0], dtype=tf.int32)
        grid_y = tf.range(grid_size[1], dtype=tf.int32)

        a, b = tf.meshgrid(grid_x, grid_y)
        x_offset = tf.reshape(a, (-1, 1))
        y_offset = tf.reshape(b, (-1, 1))
        x_y_offset = tf.concat([x_offset, y_offset], axis=-1)
        x_y_offset = tf.reshape(x_y_offset, [grid_size[0], grid_size[1], 1, 2])
        x_y_offset = tf.cast(x_y_offset, tf.float32)

        box_centers = box_centers + x_y_offset
        box_centers = box_centers * stride

        box_sizes = tf.exp(box_sizes) * anchors
        # boxes are encoded as (center_x, center_y, width, height)
        boxes = tf.concat([box_centers, box_sizes], axis=-1)
        return x_y_offset, boxes, conf_logits, prob_logits

    @staticmethod
    def _upsample(inputs, out_shape):

        new_height, new_width = out_shape[1], out_shape[2]
        inputs = tf.image.resize_nearest_neighbor(inputs, (new_height, new_width))
        inputs = tf.identity(inputs, name='upsampled')

        return inputs

    # @staticmethod
    # def _upsample(inputs, out_shape):
    #     """
    #     replace resize_nearest_neighbor with conv2d_transpose To support TensorRT 5 optimization
    #     """
    #     new_height, new_width = out_shape[1], out_shape[2]
    #     filters = 256 if (new_height == 26 and new_width==26) else 128
    #     inputs = tf.layers.conv2d_transpose(inputs,
    #                                         filters,
    #                                         kernel_size=3,
    #                                         padding='same',
    #                                         strides=(2,2),
    #                                         kernel_initializer=tf.ones_initializer())
    #     return inputs

    def forward(self, inputs, is_training=False, reuse=False):
        """
        Creates YOLO v3 model.

        :param inputs: a 4-D tensor of size [batch_size, height, width, channels].
               Dimension batch_size may be undefined. The channel order is RGB.
        :param is_training: whether is training or not.
        :param reuse: whether or not the network and its variables should be reused.
        :return:
        """
        # it will be needed later on
        self.img_size = tf.shape(inputs)[1:3]
        # set batch norm params
        batch_norm_params = {
            'decay': self._BATCH_NORM_DECAY,
            'epsilon': 1e-05,
            'scale': True,
            'is_training': is_training,
            'fused': None,  # Use fused batch norm if possible.
        }

        # Set activation_fn and parameters for conv2d, batch_norm.
        with slim.arg_scope([slim.conv2d, slim.batch_norm, common._fixed_padding],
                            reuse=reuse):
            with slim.arg_scope([slim.conv2d], normalizer_fn=slim.batch_norm,
                                normalizer_params=batch_norm_params,
                                biases_initializer=None,
                                activation_fn=lambda x: tf.nn.leaky_relu(x, alpha=self._LEAKY_RELU)):
                with tf.variable_scope('darknet-53'):
                    route_1, route_2, inputs = darknet53(inputs).outputs

                with tf.variable_scope('yolo-v3'):
                    # large objects: 13x13 grid
                    route, inputs = self._yolo_block(inputs, 512)
                    feature_map_1 = self._detection_layer(inputs, self._ANCHORS[6:9])
                    feature_map_1 = tf.identity(feature_map_1, name='feature_map_1')

                    # medium objects: 26x26 grid
                    inputs = common._conv2d_fixed_padding(route, 256, 1)
                    upsample_size = route_2.get_shape().as_list()
                    inputs = self._upsample(inputs, upsample_size)
                    inputs = tf.concat([inputs, route_2], axis=3)

                    route, inputs = self._yolo_block(inputs, 256)
                    feature_map_2 = self._detection_layer(inputs, self._ANCHORS[3:6])
                    feature_map_2 = tf.identity(feature_map_2, name='feature_map_2')

                    # small objects: 52x52 grid
                    inputs = common._conv2d_fixed_padding(route, 128, 1)
                    upsample_size = route_1.get_shape().as_list()
                    inputs = self._upsample(inputs, upsample_size)
                    inputs = tf.concat([inputs, route_1], axis=3)

                    route, inputs = self._yolo_block(inputs, 128)
                    feature_map_3 = self._detection_layer(inputs, self._ANCHORS[0:3])
                    feature_map_3 = tf.identity(feature_map_3, name='feature_map_3')

            return feature_map_1, feature_map_2, feature_map_3

    def _reshape(self, x_y_offset, boxes, confs, probs):

        grid_size = x_y_offset.shape.as_list()[:2]
        boxes = tf.reshape(boxes, [-1, grid_size[0]*grid_size[1]*3, 4])
        confs = tf.reshape(confs, [-1, grid_size[0]*grid_size[1]*3, 1])
        probs = tf.reshape(probs, [-1, grid_size[0]*grid_size[1]*3, self._NUM_CLASSES])

        return boxes, confs, probs

    def predict(self, feature_maps):
        """
        Note: given by feature_maps, compute the receptive field
              and get boxes, confs and class_probs
        input_argument: feature_maps -> [None, 13, 13, 255],
                                        [None, 26, 26, 255],
                                        [None, 52, 52, 255],
        """
        feature_map_1, feature_map_2, feature_map_3 = feature_maps
        feature_map_anchors = [(feature_map_1, self._ANCHORS[6:9]),
                               (feature_map_2, self._ANCHORS[3:6]),
                               (feature_map_3, self._ANCHORS[0:3]),]

        results = [self._reorg_layer(feature_map, anchors) for (feature_map, anchors) in feature_map_anchors]
        boxes_list, confs_list, probs_list = [], [], []

        for result in results:
            boxes, conf_logits, prob_logits = self._reshape(*result)

            confs = tf.sigmoid(conf_logits)
            probs = tf.sigmoid(prob_logits)

            boxes_list.append(boxes)
            confs_list.append(confs)
            probs_list.append(probs)

        boxes = tf.concat(boxes_list, axis=1)
        confs = tf.concat(confs_list, axis=1)
        probs = tf.concat(probs_list, axis=1)

        # boxes come from _reorg_layer as (center_x, center_y, width, height);
        # convert them to corner form (x0, y0, x1, y1)
        center_x, center_y, width, height = tf.split(boxes, [1,1,1,1], axis=-1)
        x0 = center_x - width / 2
        y0 = center_y - height / 2
        x1 = center_x + width / 2
        y1 = center_y + height / 2

        boxes = tf.concat([x0, y0, x1, y1], axis=-1)
        return boxes, confs, probs

    def compute_loss(self, y_pred, y_true, ignore_thresh=0.5, max_box_per_image=8):
        """
        Note: compute the loss
        Arguments: y_pred, list -> [feature_map_1, feature_map_2, feature_map_3]
                                        the shape of [None, 13, 13, 3*85]. etc
        """
        loss_xy, loss_wh, loss_conf, loss_class = 0., 0., 0., 0.
        total_loss, rec_50, rec_75, avg_iou = 0., 0., 0., 0.
        _ANCHORS = [self._ANCHORS[6:9], self._ANCHORS[3:6], self._ANCHORS[0:3]]

        # accumulate the losses and statistics over the three scales
        for i in range(len( y_pred )):
            result = self.loss_layer(y_pred[i], y_true[i], _ANCHORS[i], ignore_thresh, max_box_per_image)
            loss_xy    += result[0]
            loss_wh    += result[1]
            loss_conf  += result[2]
            loss_class += result[3]
            rec_50     += result[4]
            rec_75     += result[5]
            avg_iou    += result[6]

        total_loss = loss_xy + loss_wh + loss_conf + loss_class
        return [total_loss, loss_xy, loss_wh, loss_conf, loss_class, rec_50, rec_75, avg_iou]

    def loss_layer(self, feature_map_i, y_true, anchors, ignore_thresh, max_box_per_image):

        NO_OBJECT_SCALE = 1.0
        OBJECT_SCALE    = 5.0
        COORD_SCALE     = 1.0
        CLASS_SCALE     = 1.0

        grid_size = tf.shape(feature_map_i)[1:3] # [13, 13]
        stride = tf.cast(self.img_size//grid_size, dtype=tf.float32) # [32, 32]

        pred_result = self._reorg_layer(feature_map_i, anchors)
        xy_offset, pred_boxes, pred_box_conf_logits, pred_box_class_logits = pred_result
        # (13, 13, 1, 2), (1, 13, 13, 3, 4), (1, 13, 13, 3, 1), (1, 13, 13, 3, 80)
        # _reorg_layer returns pred_boxes as (center_x, center_y, width, height)

        """
        Adjust prediction
        """
        pred_box_conf  = tf.nn.sigmoid(pred_box_conf_logits)                  # adjust confidence
        pred_box_class = tf.argmax(tf.nn.softmax(pred_box_class_logits), -1)  # adjust class probabilities
        pred_box_xy = pred_boxes[..., 0:2]  # absolute center coordinate
        pred_box_wh = pred_boxes[..., 2:4]  # absolute size
        # Every cell predicts a bounding box, and every cell in y_true also
        # holds a corresponding bounding box; the IoU is computed between them.

        """
        Adjust ground truth
        """
        true_box_class = tf.argmax(y_true[..., 5:], -1)
        true_box_conf  = y_true[..., 4:5]
        true_box_xy = y_true[..., 0:2] # absolute coordinate
        true_box_wh = y_true[..., 2:4] # absolute size
        object_mask = y_true[..., 4:5]

        # initially, drag all objectness of all boxes to 0
        conf_delta = pred_box_conf - 0

        """
        Compute some online statistics
        """
        true_mins = true_box_xy - true_box_wh / 2.
        true_maxs = true_box_xy + true_box_wh / 2.
        pred_mins = pred_box_xy - pred_box_wh / 2.
        pred_maxs = pred_box_xy + pred_box_wh / 2.

        intersect_mins = tf.maximum(pred_mins, true_mins)
        intersect_maxs = tf.minimum(pred_maxs, true_maxs)

        intersect_wh = tf.maximum(intersect_maxs - intersect_mins, 0.)
        intersect_area = intersect_wh[..., 0] * intersect_wh[..., 1]

        true_area = true_box_wh[..., 0] * true_box_wh[..., 1]
        pred_area = pred_box_wh[..., 0] * pred_box_wh[..., 1]

        union_area = pred_area + true_area - intersect_area
        iou_scores = tf.truediv(intersect_area, union_area)

        iou_scores = object_mask * tf.expand_dims(iou_scores, 4)

        count = tf.reduce_sum(object_mask)
        detect_mask = tf.to_float((pred_box_conf*object_mask) >= 0.5)
        class_mask = tf.expand_dims(tf.to_float(tf.equal(pred_box_class, true_box_class)), 4)
        recall50 = tf.reduce_mean(tf.to_float(iou_scores >= 0.5 ) * detect_mask * class_mask) / (count + 1e-3)
        recall75 = tf.reduce_mean(tf.to_float(iou_scores >= 0.75) * detect_mask * class_mask) / (count + 1e-3)
        avg_iou = tf.reduce_mean(iou_scores) / (count + 1e-3)

        """
        Compare each predicted box to all true boxes
        """
        def pick_out_gt_box(y_true):
            # gather up to max_box_per_image ground-truth boxes per image
            y_true = y_true.copy()
            bs = y_true.shape[0]
            # print("=>y_true", y_true.shape)
            true_boxes_batch = np.zeros([bs, 1, 1, 1, max_box_per_image, 4], dtype=np.float32)
            # print("=>true_boxes_batch", true_boxes_batch.shape)
            for i in range(bs):
                y_true_per_layer = y_true[i]
                true_boxes_per_layer = y_true_per_layer[y_true_per_layer[..., 4] > 0][:, 0:4]
                if len(true_boxes_per_layer) == 0: continue
                true_boxes_batch[i][0][0][0][0:len(true_boxes_per_layer)] = true_boxes_per_layer

            return true_boxes_batch

        true_boxes = tf.py_func(pick_out_gt_box, [y_true], [tf.float32] )[0]
        true_xy = true_boxes[..., 0:2] # absolute location
        true_wh = true_boxes[..., 2:4] # absolute size

        true_mins = true_xy - true_wh / 2.
        true_maxs = true_xy + true_wh / 2.

        # corners of the predicted boxes, derived from center and size
        pred_mins = tf.expand_dims(pred_box_xy - pred_box_wh / 2., axis=4)
        pred_maxs = tf.expand_dims(pred_box_xy + pred_box_wh / 2., axis=4)
        pred_wh = pred_maxs - pred_mins

        intersect_mins = tf.maximum(pred_mins, true_mins)
        intersect_maxs = tf.minimum(pred_maxs, true_maxs)

        intersect_wh = tf.maximum(intersect_maxs - intersect_mins, 0.)
        intersect_area = intersect_wh[..., 0] * intersect_wh[..., 1]

        true_area = true_wh[..., 0] * true_wh[..., 1]
        pred_area = pred_wh[..., 0] * pred_wh[..., 1]

        union_area = pred_area + true_area - intersect_area
        iou_scores = tf.truediv(intersect_area, union_area)
        best_ious = tf.reduce_max(iou_scores, axis=4)
        # predictions that already overlap some true box well are not penalized
        conf_delta *= tf.expand_dims(tf.to_float(best_ious < ignore_thresh), 4)

        """
        Compare each true box to all anchor boxes
        """
        ### adjust x and y => relative position to the containing cell
        true_box_xy = true_box_xy / stride - xy_offset # t_xy in `sigma(t_xy) + c_xy`
        pred_box_xy = pred_box_xy / stride - xy_offset

        ### adjust w and h => relative size to the containing cell
        true_box_wh_logit = true_box_wh / anchors
        pred_box_wh_logit = pred_box_wh / anchors

        # replace zeros with ones so that the log below stays finite
        true_box_wh_logit = tf.where(condition=tf.equal(true_box_wh_logit,0),
                                     x=tf.ones_like(true_box_wh_logit), y=true_box_wh_logit)
        pred_box_wh_logit = tf.where(condition=tf.equal(pred_box_wh_logit,0),
                                     x=tf.ones_like(pred_box_wh_logit), y=pred_box_wh_logit)

        true_box_wh = tf.log(true_box_wh_logit) # t_wh in `p_wh*exp(t_wh)`
        pred_box_wh = tf.log(pred_box_wh_logit)

        wh_scale = tf.exp(true_box_wh) * anchors / tf.to_float(self.img_size)
        wh_scale = tf.expand_dims(2 - wh_scale[..., 0] * wh_scale[..., 1], axis=4) # the smaller the box, the bigger the scale

        xy_delta = object_mask * (pred_box_xy-true_box_xy) * wh_scale * COORD_SCALE
        wh_delta = object_mask * (pred_box_wh-true_box_wh) * wh_scale * COORD_SCALE
        conf_delta = object_mask * (pred_box_conf-true_box_conf) * OBJECT_SCALE + (1-object_mask) * conf_delta * NO_OBJECT_SCALE
        class_delta = object_mask * \
                      tf.expand_dims(tf.nn.sparse_softmax_cross_entropy_with_logits(
                          labels=true_box_class,
                          logits=pred_box_class_logits),
                          4) * CLASS_SCALE

        loss_xy    = tf.reduce_mean(tf.square(xy_delta))
        loss_wh    = tf.reduce_mean(tf.square(wh_delta))
        loss_conf  = tf.reduce_mean(tf.square(conf_delta))
        loss_class = tf.reduce_mean(class_delta)

        return loss_xy, loss_wh, loss_conf, loss_class, recall50, recall75, avg_iou
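The box decoding done in `_reorg_layer`, and the center-to-corner conversion in `predict`, can be followed for a single box with scalar arithmetic. The sketch below uses only the standard library; `decode_box` and `to_corners` are hypothetical helpers written for illustration. It assumes the relations hinted at in the comments of the listing, `sigma(t_xy) + c_xy` for the center and `p_wh * exp(t_wh)` for the size, with the center then scaled by the stride.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def decode_box(t_x, t_y, t_w, t_h, cell_x, cell_y, anchor_w, anchor_h, stride):
    """Turn raw network outputs into (center_x, center_y, width, height).

    The center is the sigmoid offset inside the grid cell plus the cell
    index, scaled by the stride; the size is the anchor scaled by exp(t).
    """
    center_x = (sigmoid(t_x) + cell_x) * stride
    center_y = (sigmoid(t_y) + cell_y) * stride
    width = math.exp(t_w) * anchor_w
    height = math.exp(t_h) * anchor_h
    return center_x, center_y, width, height


def to_corners(center_x, center_y, width, height):
    """Convert center/size form to (x0, y0, x1, y1) corners."""
    return (center_x - width / 2, center_y - height / 2,
            center_x + width / 2, center_y + height / 2)


# All-zero outputs in cell (6, 6) of the 13x13 grid, anchor 116x90, stride 32.
box = decode_box(0.0, 0.0, 0.0, 0.0, cell_x=6, cell_y=6,
                 anchor_w=116, anchor_h=90, stride=32)
print(box)               # (208.0, 208.0, 116.0, 90.0)
print(to_corners(*box))  # (150.0, 163.0, 266.0, 253.0)
```

With all raw outputs at zero, the box sits in the middle of its cell and has exactly the anchor's size, which is why the center lands at 208, the middle of a 416-pixel image.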